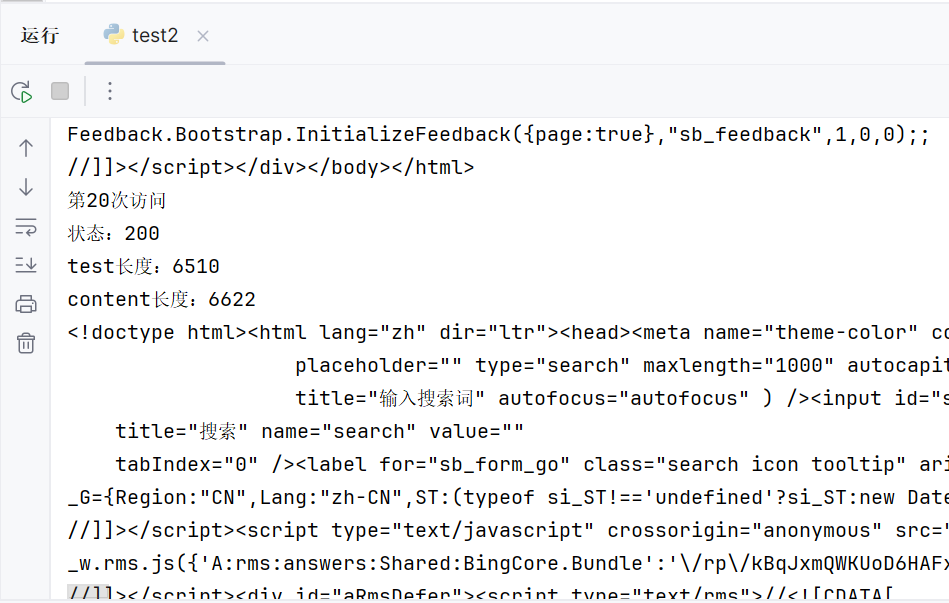

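(2) Use the `get()` function of the requests library to access one of the following websites 20 times. Print the returned status code and the `text` content, and compute the length of the page content returned by the `text` attribute and by the `content` attribute. (The page to use depends on your student ID; this task is required for a passing grade.)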

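    import requests

    url = 'https://www.bing.com'

    # Request the page 20 times and report the status and body lengths.
    for i in range(20):
        r = requests.get(url, timeout=30)
        print("Visit {}".format(i + 1))
        print("Status: {}".format(r.status_code))
        # r.text is the decoded str; r.content is the raw bytes.
        print("text length: {}".format(len(r.text)))
        print("content length: {}".format(len(r.content)))
        print(r.text)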

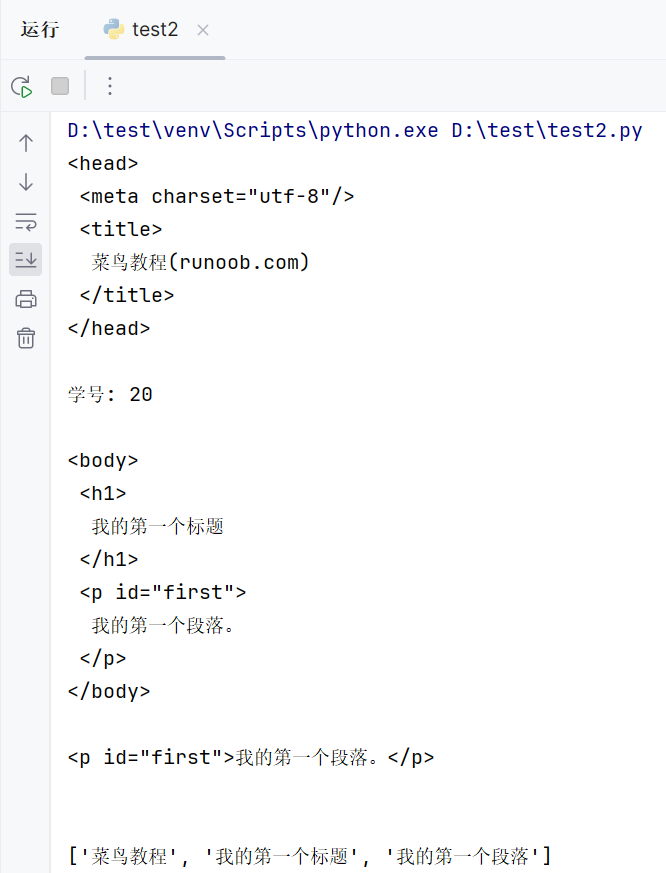
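
The two lengths in task (2) usually differ because `text` is a decoded string and `content` is the raw byte string. The sketch below illustrates this without any network access; `page_text` is a hypothetical stand-in for `r.text`, and UTF-8 is assumed as the encoding.

```python
# Hypothetical page body standing in for r.text; no request is made.
page_text = "<title>菜鸟教程(runoob.com)</title>"

# r.content would hold the encoded bytes; UTF-8 is assumed here.
page_bytes = page_text.encode("utf-8")

text_length = len(page_text)      # counts characters
content_length = len(page_bytes)  # counts bytes

print("text length:", text_length)
print("content length:", content_length)
```

Each Chinese character takes several bytes in UTF-8, so the byte count is larger than the character count whenever the page contains non-ASCII text.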

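(3) Below is a simple HTML page. Store it as a string and complete the tasks that follow. (Required for a "good" grade.)

Requirements:

a. Print the contents of the head tag and the last two digits of your student ID

b. Get the contents of the body tag

c. Get the tag object whose id is first

d. Extract and print the Chinese characters in the HTML page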

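    from bs4 import BeautifulSoup
    import re

    html = """
    <!DOCTYPE html>
    <html>
    <head>
    <meta charset="utf-8">
    <title>菜鸟教程(runoob.com)</title>
    </head>
    <body>
    <h1>我的第一个标题</h1>
    <p id="first">我的第一个段落。</p>
    </body>
    <table border="1">
    <tr>
    <td>row 1, cell 1</td>
    <td>row 1, cell 2</td>
    </tr>
    <tr>
    <td>row 2, cell 1</td>
    <td>row 2, cell 2</td>
    </tr>
    </table>
    </html>
    """

    # Name the parser explicitly so the result does not depend on which parsers are installed.
    soup = BeautifulSoup(html, 'html.parser')

    # a. Contents of the head tag, then the last two digits of the student ID.
    print(soup.head.prettify())
    print("Student ID: 20\n")

    # b. Contents of the body tag.
    print(soup.body.prettify())

    # c. The tag object whose id is "first".
    print(soup.find(id="first"))
    print("\n")

    # d. Every run of Chinese characters (CJK Unified Ideographs) in the page.
    s = re.compile('[\u4e00-\u9fff]+')
    chinese = s.findall(html)
    print(chinese)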

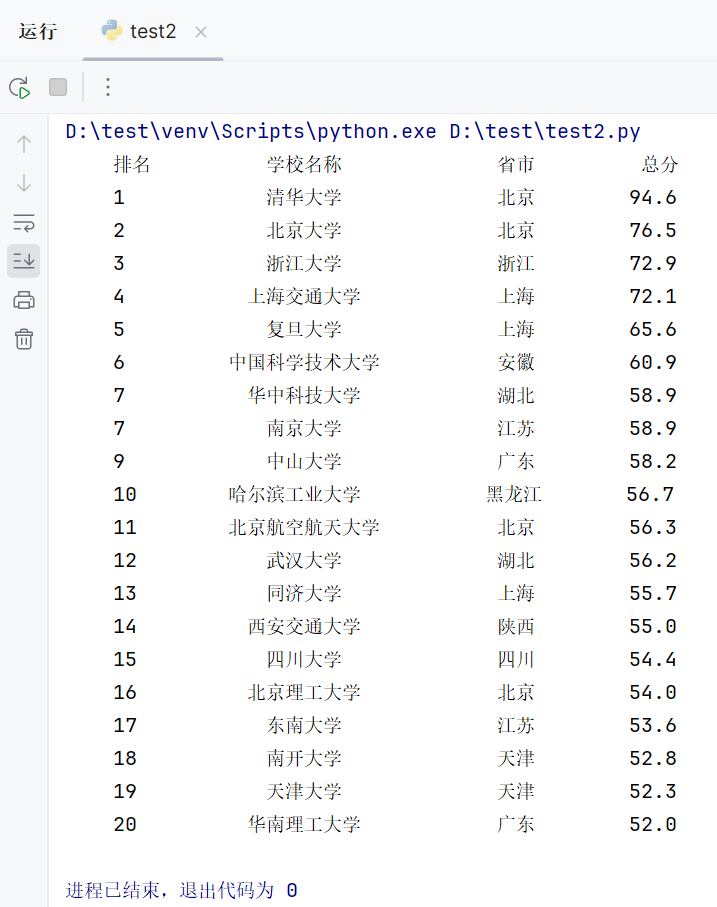
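
To see what the character range in task (d) does and does not match, the sketch below applies the same pattern to two lines taken from the sample page. It uses only the standard library; the variable names are illustrative.

```python
import re

# Same character range as the pattern used above (CJK Unified Ideographs).
chinese_run = re.compile(r'[\u4e00-\u9fff]+')

# Two lines taken from the sample page.
title_line = '<title>菜鸟教程(runoob.com)</title>'
paragraph_line = '<p id="first">我的第一个段落。</p>'

title_matches = chinese_run.findall(title_line)
paragraph_matches = chinese_run.findall(paragraph_line)

print(title_matches)
print(paragraph_matches)
```

The full stop `。` is a CJK punctuation mark outside this range, so it is not part of the match. If punctuation should be kept as well, the character class would need to be widened.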

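(4) Scrape the Chinese university ranking website

https://www.shanghairanking.cn/rankings/bcur/201811

Requirements:

(1) Scrape the university rankings (student IDs ending in 9 or 0 scrape the year 2019)

(2) Save the scraped data as a CSV file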

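    import csv

    import bs4
    import requests
    from bs4 import BeautifulSoup

    def getHTMLText(url):
        """Return the page text, or an empty string if the request fails."""
        try:
            r = requests.get(url, timeout=30)
            r.raise_for_status()
            r.encoding = r.apparent_encoding
            return r.text
        except requests.RequestException:
            print("Request failed")
            return ''

    def fillUnivlist(ulist, html):
        """Append [rank, name, province/city, total score] for each table row."""
        soup = BeautifulSoup(html, "html.parser")
        tbody = soup.find('tbody')
        if tbody is None:  # empty page or unexpected layout
            return
        for tr in tbody.children:
            if isinstance(tr, bs4.element.Tag):
                tds = tr('td')
                if len(tds) >= 4:
                    # get_text() also works for cells with nested tags, where .string is None.
                    ulist.append([td.get_text(strip=True) for td in tds[:4]])

    def printUnivlist(ulist, num):
        """Print the first num rows as a tab-separated table."""
        print("{:^4}\t{:<15}\t{:<8}\t{:<8}".format("Rank", "University", "Province/City", "Total score"))
        for u in ulist[:num]:
            print("{:^4}\t{:<15}\t{:<8}\t{:<8}".format(u[0], u[1], u[2], u[3]))

    def main(num):
        allUniv = []
        url = "https://www.shanghairanking.cn/rankings/bcur/201911.html"
        html = getHTMLText(url)
        fillUnivlist(allUniv, html)
        printUnivlist(allUniv, num)
        return allUniv

    allUniv = main(20)

    # Save the scraped rows to a CSV file.
    with open('example.csv', 'w', newline='', encoding='utf-8') as file:
        writer = csv.writer(file)
        writer.writerow(["Rank", "University", "Province/City", "Total score"])
        writer.writerows(allUniv)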
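
The CSV step can also be tried on its own, without running the scraper. The sketch below is a minimal example under stated assumptions: the rows are hypothetical placeholders rather than real ranking data, and the file is written to a temporary directory instead of the working directory.

```python
import csv
import os
import tempfile

# Hypothetical placeholder rows, not real ranking data.
header = ["Rank", "University", "Province/City", "Total score"]
rows = [
    ["1", "University A", "Province X", "95.0"],
    ["2", "University B", "Province Y", "90.5"],
]

csv_path = os.path.join(tempfile.mkdtemp(), "example.csv")

# newline='' lets the csv module control line endings itself.
with open(csv_path, "w", newline="", encoding="utf-8") as f:
    writer = csv.writer(f)
    writer.writerow(header)   # one header row
    writer.writerows(rows)    # one CSV line per inner list

# Read the file back to confirm the header and rows survived.
with open(csv_path, newline="", encoding="utf-8") as f:
    read_back = list(csv.reader(f))

print(read_back)
```

`writerows` expects an iterable of row sequences, so a list of lists such as the one built by `fillUnivlist` can be passed to it directly.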