- Shannon Coding: using codeword lengths of \(\lceil \log\frac{1}{p_i}\rceil\)

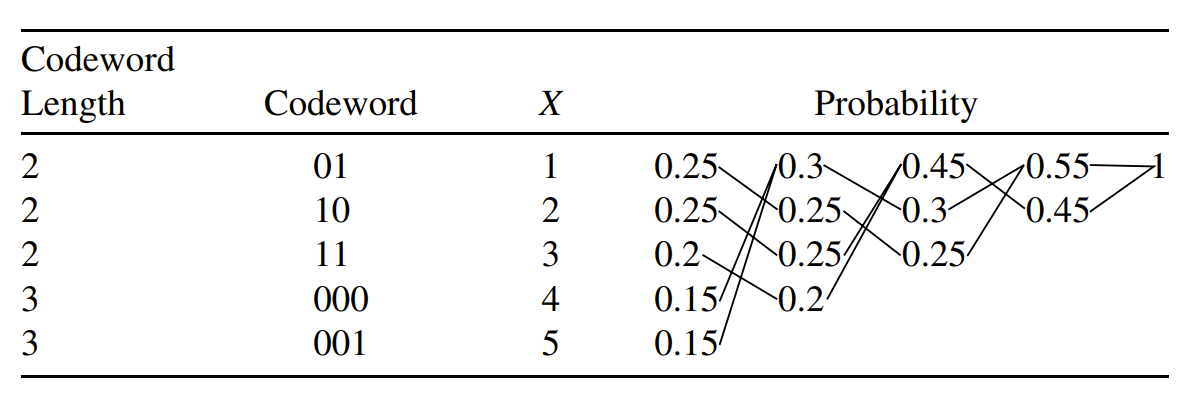

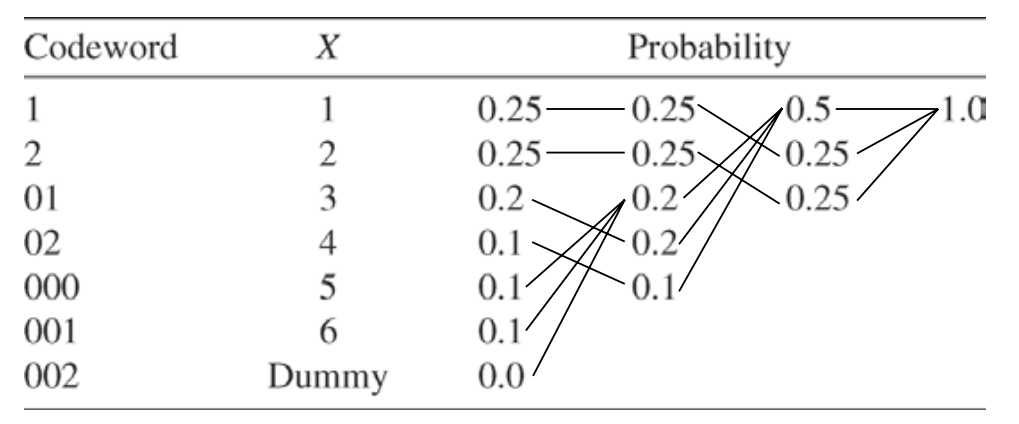

- Huffman Coding: combining the \(D\) least likely symbols into one symbol until we are finally left with only one symbol.

an example for \(D=2\):

an example for \(D=3\): if combine the symbols \(k\) times, the total number of symbols should be \(1+k(D-1)\), otherwise the \(D\)-ary alphabet is underutilized. So we add enough dummy symbols(with prob. zero).

-

Fano Coding: if \(D=2\)

-

order the symbols in decreasing order of their prob.s

-

choose \(k\) such that \(|\sum_{i=1}^k p_i -\sum_{i=k+1}^N p_i|\) is minimized

-

assign "0" to the first bit of the upper set (\(1 \rightarrow k\))

assign "1" to the first bit of the lowerset (\(k+1 \rightarrow N\))

-

repeat this process for each subset

-

an example for \(D=2\):

- Shnnon-Fano-Elias Coding:

section 5.9