- -为Softmax分类器实现完全矢量化的损失函数

- -实现解析梯度完全矢量化的表达式

- 使用数值梯度检查实现结果

- 使用验证集调整学习率和正则化强度

- 使用SGD优化损失函数

- 可视化最终学习的权重

softmax.ipynb

库、绘图设置和数据的导入和SVM一样

Train data shape: (49000, 3073) Train labels shape: (49000,) Validation data shape: (1000, 3073) Validation labels shape: (1000,) Test data shape: (1000, 3073) Test labels shape: (1000,) dev data shape: (500, 3073) dev labels shape: (500,)

Softmax Classifier

`cs231n/classifiers/softmax.py`

首先完成带嵌套循环的softmax_loss_naive

def softmax_loss_naive(W, X, y, reg):

# Initialize the loss and gradient to zero.

loss = 0.0

dW = np.zeros_like(W) #创建一个与W具有相同形状的全零数组。

N = X.shape[0]

for i in range(N):

score = X[i].dot(W) #长度为C?

exp_score = np.exp(score - np.max(score)) #防止溢出

loss += -np.log(exp_score[y[i]]/np.sum(exp_score)) / N #复刻公式

#loss += (-np.log(exp_score[y[i]])+ np.log(np.sum(exp_score))) / N #展开

dexp_score = np.zeros_like(exp_score)

dexp_score[y[i]] -= 1/exp_score[y[i]]/N

dexp_score += 1 /np.sum(exp_score) / N

dscore = dexp_score *exp_score

dW += X[[i]].T.dot([dscore])

loss +=reg*np.sum(W**2)

dW += 2*reg*W

# *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

return loss, dW

注意使用exp避免数值溢出之后要用本地梯度乘上游梯度得到梯度值。

向量化的softmax_loss_vectorized

def softmax_loss_vectorized(W, X, y, reg):

# Initialize the loss and gradient to zero.

loss = 0.0

dW = np.zeros_like(W)

#

scores = X.dot(W)

#exp_score = np.exp(score - np.max(score))

scores -= np.max(scores, axis=1, keepdims=True)#保持dim

exp_scores = np.exp(scores)

probs = exp_scores / np.sum(exp_scores, axis=1, keepdims=True)

# Compute the loss

N = X.shape[0] #有点不熟悉这个维度012的顺序

loss = np.sum(-np.log(probs[np.arange(N), y])) / N

loss += reg * np.sum(W * W) #正则化强度的系数其实无所谓?只要不太小应该效果都差不多

# Compute the gradient

dscores = probs

dscores[np.arange(N), y] -= 1

dscores /= N

dW = X.T.dot(dscores)

dW += reg * W

return loss, dW

超参数调试

# Use the validation set to tune hyperparameters (regularization strength and

# learning rate). You should experiment with different ranges for the learning

# rates and regularization strengths; if you are careful you should be able to

# get a classification accuracy of over 0.35 on the validation set.

from cs231n.classifiers import Softmax

results = {}

best_val = -1

best_softmax = None

################################################################################

# TODO: #

# Use the validation set to set the learning rate and regularization strength. #

# This should be identical to the validation that you did for the SVM; save #

# the best trained softmax classifer in best_softmax. #

################################################################################

# Provided as a reference. You may or may not want to change these hyperparameters

learning_rates = [3e-7,4e-7,5e-7]

regularization_strengths = [0.5e4, 1e4,1.5e4,2e4]

# *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

# Iterate over all hyperparameter combinations

for lr in learning_rates:

for reg in regularization_strengths:

# Create a new Softmax classifier

softmax = Softmax()

# Train the classifier on the training set

softmax.train(X_train, y_train, learning_rate=lr, reg=reg, num_iters=1000)

# Evaluate the classifier on the training and validation sets

train_accuracy = np.mean(softmax.predict(X_train) == y_train)

val_accuracy = np.mean(softmax.predict(X_val) == y_val)

# Save the results for this hyperparameter combination

results[(lr, reg)] = (train_accuracy, val_accuracy)

# Update the best validation accuracy and best classifier

if val_accuracy > best_val:

best_val = val_accuracy

best_softmax = softmax

# *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

# Print out results.

for lr, reg in sorted(results):

train_accuracy, val_accuracy = results[(lr, reg)]

print('lr %e reg %e train accuracy: %f val accuracy: %f' % (

lr, reg, train_accuracy, val_accuracy))

print('best validation accuracy achieved during cross-validation: %f' % best_val)

目前调出来比较好一点的是

lr 5.000000e-07 reg 5.000000e+03 train accuracy: 0.386000 val accuracy: 0.392000

最后看看在test上的准确率

# evaluate on test set

# Evaluate the best softmax on test set

y_test_pred = best_softmax.predict(X_test)

test_accuracy = np.mean(y_test == y_test_pred)

print('softmax on raw pixels final test set accuracy: %f' % (test_accuracy, ))

softmax on raw pixels final test set accuracy: 0.384000

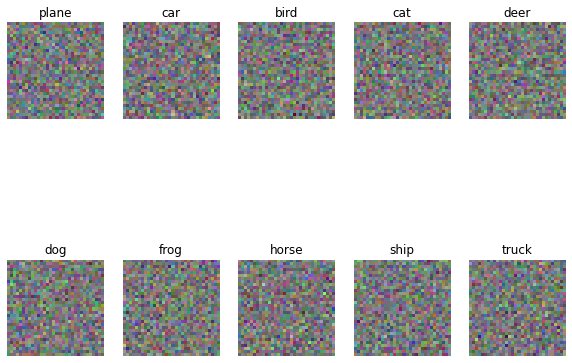

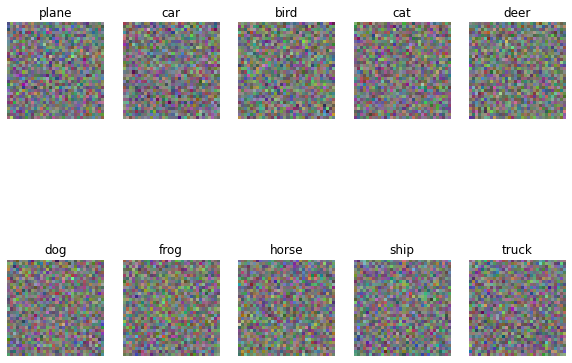

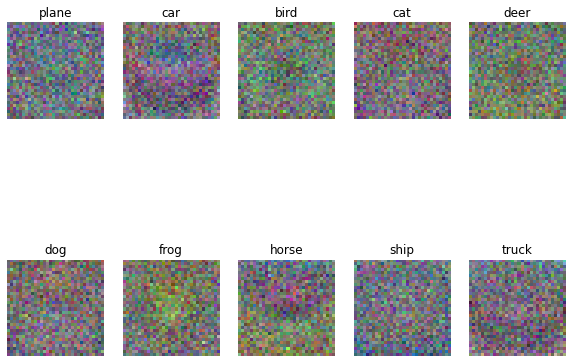

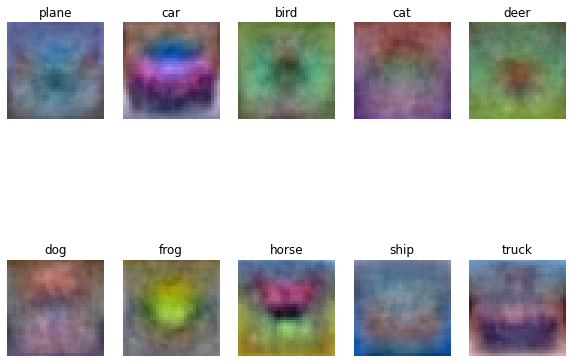

对比一下不同步数的权重图像差异

|

|

|

|

| 100 | 500 | 1000 |

|

|

|

|

| 1500 | 3000 | 5000 |

(多整了一些)噪点的减少还是非常明显的,虽然1500之后准确率没太大区别