Installing a Fully Distributed Hadoop Cluster

Version used: hadoop-3.2.0

Install VMware

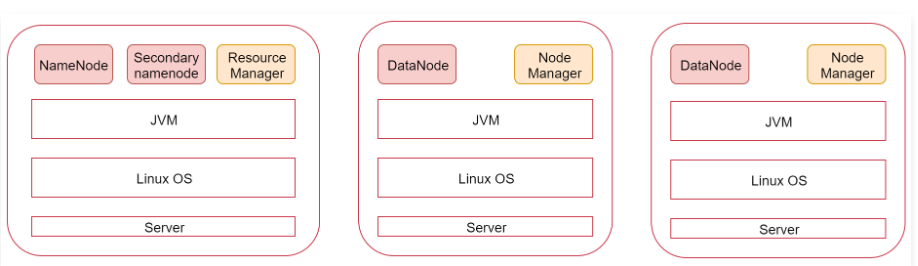

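The figure shows three nodes. The node on the left is the master node and the two on the right are worker (slave) nodes; a Hadoop cluster uses this master/worker architecture.

By default, different nodes run different daemon processes.

Following the plan in the figure, we now build a Hadoop cluster with one master node and two worker nodes.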

Install Hadoop

Three nodes:

bigdata01 192.168.182.100

bigdata02 192.168.182.101

bigdata03 192.168.182.102

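Environment preparation

IP

Set a static IP (shown here for bigdata01; on bigdata02 and bigdata03 use IPADDR=192.168.182.101 and IPADDR=192.168.182.102 respectively)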

[root@bigdata01 ~]# vi /etc/sysconfig/network-scripts/ifcfg-ens33

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="static"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens33"

UUID="9a0df9ec-a85f-40bd-9362-ebe134b7a100"

DEVICE="ens33"

ONBOOT="yes"

IPADDR=192.168.182.100

GATEWAY=192.168.182.2

DNS1=192.168.182.2

[root@bigdata01 ~]# service network restart

Restarting network (via systemctl): [ OK ]

[root@bigdata01 ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:9c:86:11 brd ff:ff:ff:ff:ff:ff

inet 192.168.182.100/24 brd 192.168.182.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::c8a8:4edb:db7b:af53/64 scope link noprefixroute

valid_lft forever preferred_lft forever
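Only bigdata01's file is shown above; on the other two nodes the file differs mainly in IPADDR. The following is a minimal sketch, assuming GNU sed and the ifcfg-ens33 layout above, of a hypothetical helper set_static_ip (not a CentOS tool) that switches BOOTPROTO to static and replaces or appends the IPADDR line in a given file. It only edits the file; the network still has to be restarted afterwards.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of CentOS): set a static IPv4 address in an
# ifcfg file such as /etc/sysconfig/network-scripts/ifcfg-ens33.
# Assumes GNU sed, whose -i option edits the file in place.
set_static_ip() {
  local cfg="$1" ip="$2"
  # Switch from DHCP to a static address (same quoting style as the file above).
  sed -i 's/^BOOTPROTO=.*/BOOTPROTO="static"/' "$cfg"
  # Replace an existing IPADDR line, or append one if it is missing.
  if grep -q '^IPADDR=' "$cfg"; then
    sed -i "s/^IPADDR=.*/IPADDR=${ip}/" "$cfg"
  else
    printf 'IPADDR=%s\n' "$ip" >> "$cfg"
  fi
}
```

For example, on bigdata02 one would run set_static_ip /etc/sysconfig/network-scripts/ifcfg-ens33 192.168.182.101 and then service network restart. GATEWAY and DNS1 are left untouched by this sketch.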

hostname

[root@bigdata01 ~]# hostname bigdata01

[root@bigdata01 ~]# vi /etc/hostname

bigdata01

[root@bigdata01 ~]# vi /etc/hosts

192.168.182.100 bigdata01
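The hostname bigdata01 command only renames the machine until the next reboot, which is why /etc/hostname is edited as well; on CentOS 7, hostnamectl set-hostname does both in one step. If the same preparation script is run on all three nodes, a lookup from IP to hostname avoids editing the script per node. A minimal sketch with a hypothetical function hostname_for_ip that hard-codes the three-node plan:

```shell
#!/usr/bin/env bash
# Hypothetical helper: map a node's planned IP to its planned hostname.
# The table mirrors the plan bigdata01..bigdata03 (192.168.182.100-102).
hostname_for_ip() {
  case "$1" in
    192.168.182.100) echo bigdata01 ;;
    192.168.182.101) echo bigdata02 ;;
    192.168.182.102) echo bigdata03 ;;
    *) return 1 ;;  # IP is not part of the plan
  esac
}
```

On bigdata02, for example: hostnamectl set-hostname "$(hostname_for_ip 192.168.182.101)".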

firewalld

[root@bigdata01 ~]# systemctl stop firewalld

[root@bigdata01 ~]# systemctl disable firewalld

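Configure /etc/hosts

The master node needs to connect remotely to the two worker nodes by hostname, so it must be able to resolve their hostnames. By default a remote machine can only be reached by its IP; to reach it by hostname, add each machine's IP and hostname to the node's /etc/hosts file.

So on bigdata01, add the following entries to /etc/hosts. It is best to include the current node as well: the file is then identical on every node and can be copied as-is to the two worker nodes.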

[root@bigdata01 ~]# vi /etc/hosts

192.168.182.100 bigdata01

192.168.182.101 bigdata02

192.168.182.102 bigdata03

[root@bigdata02 ~]# vi /etc/hosts

192.168.182.100 bigdata01

192.168.182.101 bigdata02

192.168.182.102 bigdata03

[root@bigdata03 ~]# vi /etc/hosts

192.168.182.100 bigdata01

192.168.182.101 bigdata02

192.168.182.102 bigdata03
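Because the three files are identical, one option is to edit /etc/hosts once on bigdata01 and copy it, e.g. scp /etc/hosts bigdata02:/etc/hosts (this asks for a password until passwordless SSH is set up). Below is a minimal sketch of a hypothetical helper add_hosts_entries that appends only the entries that are missing, so running it twice does not create duplicates; the file path is a parameter so it can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: make sure HOSTS_FILE contains the three cluster entries.
# Existing lines are left alone; only missing entries are appended.
add_hosts_entries() {
  local hosts_file="$1" entry
  for entry in "192.168.182.100 bigdata01" \
               "192.168.182.101 bigdata02" \
               "192.168.182.102 bigdata03"; do
    # -x: match the whole line, -F: fixed string (dots are not wildcards)
    grep -qxF -- "$entry" "$hosts_file" 2>/dev/null ||
      printf '%s\n' "$entry" >> "$hosts_file"
  done
}
```

Only exact lines count as present: an existing entry written with a tab or extra spaces would not match and the entry would be appended again.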

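Passwordless SSH login

First, run the following commands on bigdata01 to copy its public key information to the two worker nodes. This assumes bigdata01 already has an SSH key pair and that its public key is already in ~/.ssh/authorized_keys (for example, created with ssh-keygen -t rsa followed by cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys).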

[root@bigdata01 ~]# scp ~/.ssh/authorized_keys bigdata02:~/

The authenticity of host 'bigdata02 (192.168.182.101)' can't be established.

ECDSA key fingerprint is SHA256:uUG2QrWRlzXcwfv6GUot9DVs9c+iFugZ7FhR89m2S00.

ECDSA key fingerprint is MD5:82:9d:01:51:06:a7:14:24:a9:16:3d:a1:5e:6d:0d:16.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'bigdata02,192.168.182.101' (ECDSA) to the list of known hosts.

root@bigdata02's password:

authorized_keys 100% 396 506.3KB/s 00:00

[root@bigdata01 ~]# scp ~/.ssh/authorized_keys bigdata03:~/

The authenticity of host 'bigdata03 (192.168.182.102)' can't be established.

ECDSA key fingerprint is SHA256:uUG2QrWRlzXcwfv6GUot9DVs9c+iFugZ7FhR89m2S00.

ECDSA key fingerprint is MD5:82:9d:01:51:06:a7:14:24:a9:16:3d:a1:5e:6d:0d:16.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'bigdata03,192.168.182.102' (ECDSA) to the list of known hosts.

root@bigdata03's password:

authorized_keys 100% 396 606.1KB/s 00:00

Then, on bigdata02 and bigdata03, append the copied keys to ~/.ssh/authorized_keys:

bigdata02:

[root@bigdata02 ~]# cat ~/authorized_keys >> ~/.ssh/authorized_keys

bigdata03:

[root@bigdata03 ~]# cat ~/authorized_keys >> ~/.ssh/authorized_keys
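The cat ... >> command appends the keys again every time it is run, and it assumes ~/.ssh already exists on the worker. With its default StrictModes setting, sshd also ignores authorized_keys when the file or ~/.ssh is writable by group or others, so 700 for the directory and 600 for the file are the usual choice. A minimal sketch of a hypothetical merge_keys helper that creates the directory if needed, appends only keys not yet present, and tightens the permissions. As an alternative, the standard ssh-copy-id tool (e.g. ssh-copy-id bigdata02, run on bigdata01) does the copy and append in one step.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append public keys from SRC to the authorized_keys
# file DST, skipping keys that are already present.
merge_keys() {
  local src="$1" dst="$2" key dir
  dir=$(dirname "$dst")
  mkdir -p "$dir"
  chmod 700 "$dir"   # sshd (StrictModes) rejects group/world-writable dirs
  touch "$dst"
  # "|| [ -n "$key" ]" also handles a last line without a trailing newline.
  while IFS= read -r key || [ -n "$key" ]; do
    [ -z "$key" ] && continue   # skip blank lines
    grep -qxF -- "$key" "$dst" || printf '%s\n' "$key" >> "$dst"
  done < "$src"
  chmod 600 "$dst"
}
```

On bigdata02 this would be merge_keys ~/authorized_keys ~/.ssh/authorized_keys. To check from bigdata01 without being prompted, ssh -o BatchMode=yes bigdata02 hostname fails with an error instead of asking for a password if key login does not work.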

To verify, SSH from bigdata01 to the two worker nodes. If no password is requested, the setup works: the master node can now log in to every node without a password.

[root@bigdata01 ~]# ssh bigdata02

Last login: Tue Apr 7 21:33:58 2020 from bigdata01

[root@bigdata02 ~]# exit

logout

Connection to bigdata02 closed.

[root@bigdata01 ~]# ssh bigdata03

Last login: Tue Apr 7 21:17:30 2020 from 192.168.182.1

[root@bigdata03 ~]# exit

logout

Connection to bigdata03 closed.

[root@bigdata01 ~]#

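JDK configuration

Time synchronization across the cluster

Whenever a cluster spans multiple nodes, the clocks of those nodes must be synchronized.

Start on bigdata01.

Synchronize the time with ntpdate -u ntp.sjtu.edu.cn. Running it at first, however, fails with an error that the ntpdate command cannot be found.

ntpdate is not installed by default; install it online with yum by running yum install -y ntpdate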

[root@bigdata01 ~]# yum install -y ntpdate

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.cn99.com

* extras: mirrors.cn99.com

* updates: mirrors.cn99.com

base | 3.6 kB 00:00

extras | 2.9 kB 00:00

updates | 2.9 kB 00:00

Resolving Dependencies

--> Running transaction check

---> Package ntpdate.x86_64 0:4.2.6p5-29.el7.centos will be installed

--> Finished Dependency Resolution

Dependencies Resolved

===============================================================================

Package Arch Version Repository Size

===============================================================================

Installing:

ntpdate x86_64 4.2.6p5-29.el7.centos base 86 k

Transaction Summary

===============================================================================

Install 1 Package

Total download size: 86 k

Installed size: 121 k

Downloading packages:

ntpdate-4.2.6p5-29.el7.centos.x86_64.rpm | 86 kB 00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : ntpdate-4.2.6p5-29.el7.centos.x86_64 1/1

Verifying : ntpdate-4.2.6p5-29.el7.centos.x86_64 1/1

Installed:

ntpdate.x86_64 0:4.2.6p5-29.el7.centos

Complete!

Then run ntpdate -u ntp.sjtu.edu.cn manually to confirm that it works:

[root@bigdata01 ~]# ntpdate -u ntp.sjtu.edu.cn

7 Apr 21:21:01 ntpdate[5447]: step time server 185.255.55.20 offset 6.252298 sec
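The offset in this output (6.252298 sec here) is how far the local clock was off before it was corrected. A minimal sketch of two hypothetical helpers, ntp_offset and offset_exceeds, that extract that number with awk and compare it with a threshold, e.g. to log unusually large corrections. It assumes the output format shown above, where the value directly follows the word offset.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for inspecting ntpdate output such as:
#   7 Apr 21:21:01 ntpdate[5447]: step time server 185.255.55.20 offset 6.252298 sec
# Assumption: the offset value is the field right after the word "offset".

# Print the offset (in seconds) found in the given ntpdate output.
ntp_offset() {
  printf '%s\n' "$1" |
    awk '{ for (i = 1; i < NF; i++) if ($i == "offset") { print $(i + 1); exit } }'
}

# Exit 0 if |offset| is greater than the threshold $2 (seconds),
# 1 if it is not, and 2 if no offset could be parsed.
offset_exceeds() {
  local off
  off=$(ntp_offset "$1")
  [ -n "$off" ] || return 2
  awk -v o="$off" -v t="$2" 'BEGIN { o += 0; t += 0; if (o < 0) o = -o; exit !(o > t) }'
}
```

For example: offset_exceeds "$(ntpdate -u ntp.sjtu.edu.cn 2>&1)" 1 && echo "clock corrected by more than 1 s".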

It is recommended to add this time synchronization to the Linux crontab (/etc/crontab) so that it runs once a minute:

[root@bigdata01 ~]# vi /etc/crontab

* * * * * root /usr/sbin/ntpdate -u ntp.sjtu.edu.cn
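Entries in /etc/crontab carry a user field (root here), unlike entries added with crontab -e. Adding the line by hand a second time would make ntpdate run twice a minute, so a minimal sketch of a hypothetical ensure_line helper appends the entry only if that exact line is missing. The file path is a parameter so the helper can be tried on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append LINE to FILE unless an identical line exists.
ensure_line() {
  local file="$1" line="$2"
  # -x: whole-line match, -F: fixed string (the "*" fields are not patterns)
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# The entry from above: every minute, as root, sync against ntp.sjtu.edu.cn.
NTP_CRON_LINE='* * * * * root /usr/sbin/ntpdate -u ntp.sjtu.edu.cn'
```

Usage: ensure_line /etc/crontab "$NTP_CRON_LINE" (keep the variable quoted so the shell does not expand the asterisks).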