I. DNS in Kubernetes

A Kubernetes cluster uses CoreDNS as its DNS service by default:

# kubectl get svc -n kube-system |grep dns

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 24d

To test, install bind-utils on node-1-231:

yum install -y bind-utils

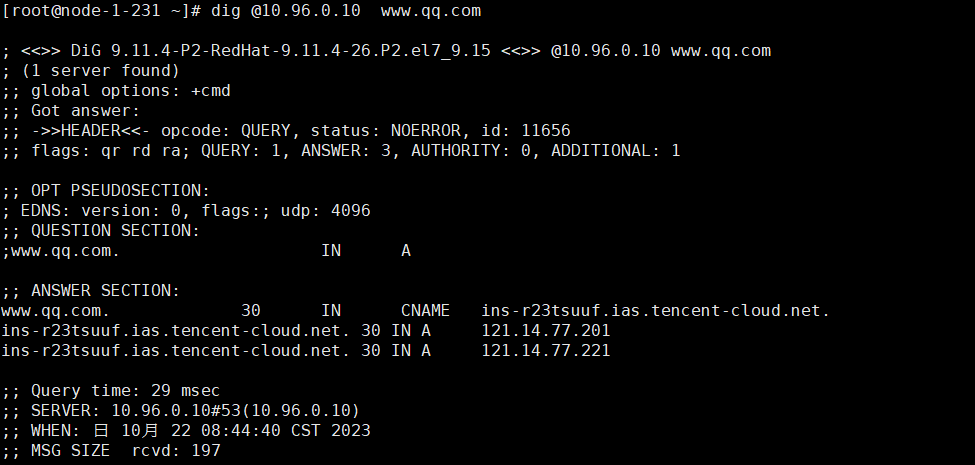

Resolve an external domain name:

dig @10.96.0.10 www.qq.com

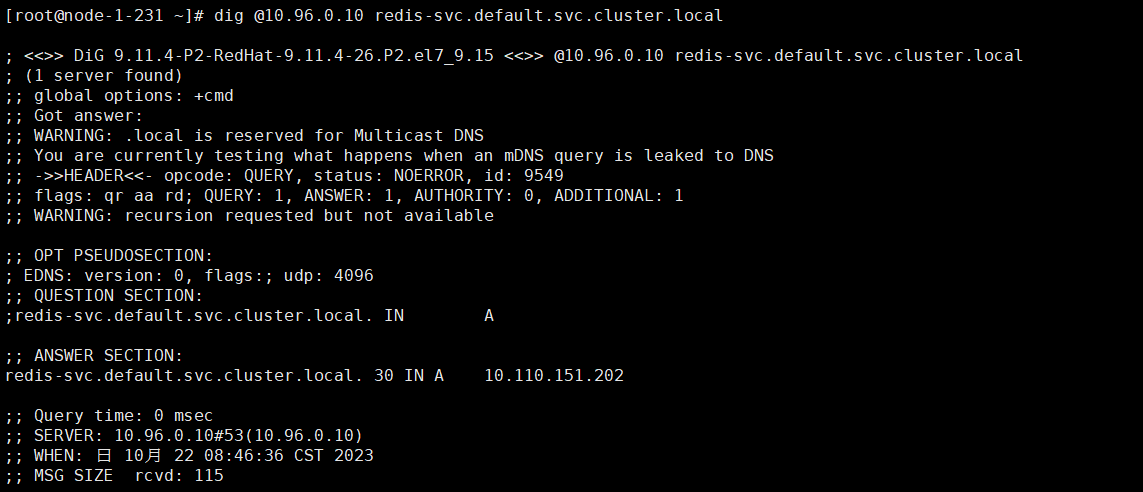

Resolve an in-cluster domain name:

dig @10.96.0.10 redis-svc.default.svc.cluster.local

# kubectl get svc |grep redis

redis-svc ClusterIP 10.110.151.202 <none> 6379/TCP 6d8h

Note: redis-svc is the Service name. The fully qualified domain name of a Service has the form <service>.<namespace>.svc.cluster.local.

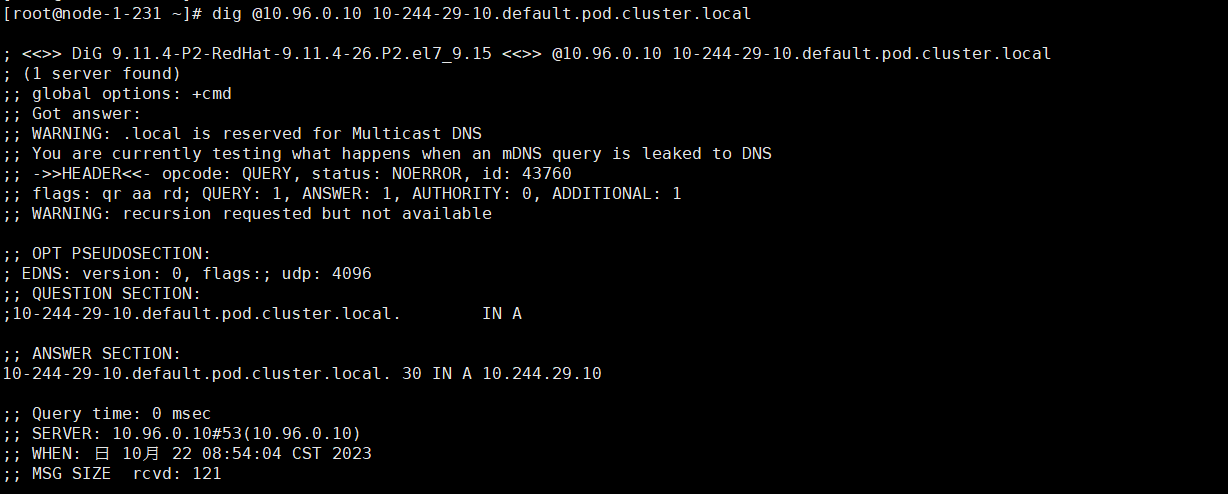

A Pod's domain name has the form <pod-ip>.<namespace>.pod.<clusterdomain>, where the dots in the Pod IP are replaced by dashes, for example 10-244-29-10.default.pod.cluster.local:

dig @10.96.0.10 10-244-29-10.default.pod.cluster.local

# kubectl get pod -A -o wide |grep redis

default redis-sts-0 1/1 Running 4 (24m ago) 5d12h 10.244.29.10 node-1-232 <none> <none>

default redis-sts-1 1/1 Running 4 (23m ago) 5d12h 10.244.154.11 node-1-233 <none> <none>
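The two name formats above can be sketched as small helpers. This is only an illustration: the function names are made up for this example, and the cluster domain is assumed to be the default cluster.local.

```python
# Hypothetical helpers that build the DNS names described above.
# Assumption: the cluster domain is "cluster.local" unless overridden.

def service_fqdn(service: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """<service>.<namespace>.svc.<clusterdomain>"""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def pod_fqdn(pod_ip: str, namespace: str, cluster_domain: str = "cluster.local") -> str:
    """<pod-ip with dots replaced by dashes>.<namespace>.pod.<clusterdomain>"""
    return f"{pod_ip.replace('.', '-')}.{namespace}.pod.{cluster_domain}"


if __name__ == "__main__":
    print(service_fqdn("redis-svc", "default"))
    print(pod_fqdn("10.244.29.10", "default"))
```

The resulting strings are the names passed to dig in the commands above.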

The kube-dns Service is backed by the CoreDNS Pods:

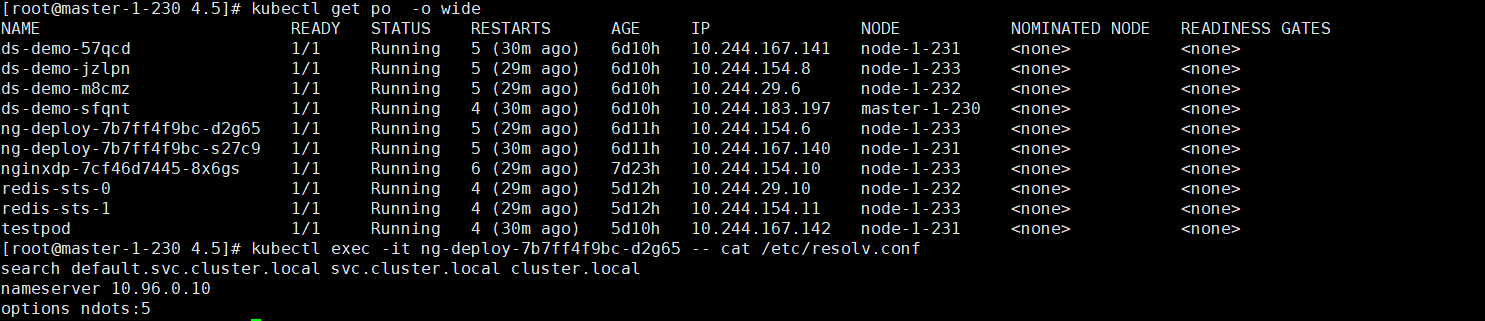

kubectl get po -n kube-system -o wide

View /etc/resolv.conf in a Pod in the default namespace:

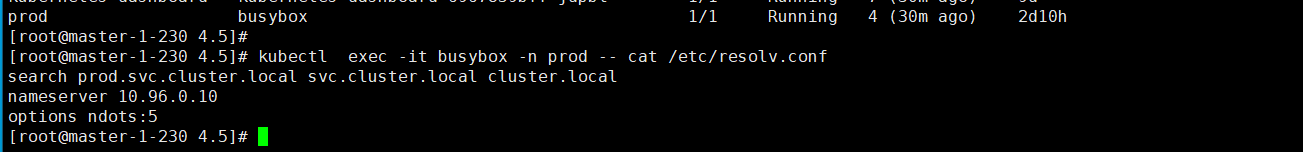

View /etc/resolv.conf in a Pod in the prod namespace:

kubectl exec -it busybox -n prod -- cat /etc/resolv.conf

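Explanation:

- nameserver: the IP of the DNS server, which is in fact the IP of the kube-dns Service.

- search: the list of search suffixes. The more suffixes are configured, the more lookups a name may need. For a Pod in the default namespace the suffixes are default.svc.cluster.local, svc.cluster.local and cluster.local, so a name may need up to 8 queries (4 for IPv4 and 4 for IPv6: one per suffix plus the name itself) before it resolves correctly.

- options: resolver options, given as one or more key-value pairs. With ndots:5, for example, a name that contains at least 5 dots is treated as a full domain name and queried as is first; a name with fewer dots has the search suffixes appended before it is queried.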
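To make the interaction between search and ndots concrete, here is a minimal sketch of the order in which a resolver would try names. It is a simplification of resolver behaviour written for this article, not the actual resolver code; the function name and the SEARCH constant are assumptions for illustration.

```python
# Simplified model of how "search" and "ndots" expand a name into query candidates.
# Assumption: mirrors the usual resolver rule (dots >= ndots -> try the name as is first).

SEARCH = ["default.svc.cluster.local", "svc.cluster.local", "cluster.local"]


def candidate_names(name: str, search: list[str], ndots: int = 5) -> list[str]:
    """Return the names tried, in order, for one record type."""
    if name.endswith("."):
        # A trailing dot marks the name as fully qualified: no suffixes are appended.
        return [name]
    absolute = name + "."
    suffixed = [f"{name}.{suffix}." for suffix in search]
    if name.count(".") >= ndots:
        return [absolute] + suffixed
    return suffixed + [absolute]


if __name__ == "__main__":
    for candidate in candidate_names("www.qq.com", SEARCH):
        print(candidate)
```

For www.qq.com (2 dots, fewer than 5) the three suffixed names are tried before the name itself, which gives 4 candidates per record type and therefore up to 8 queries for A plus AAAA. Writing the name with a trailing dot skips the search list entirely.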

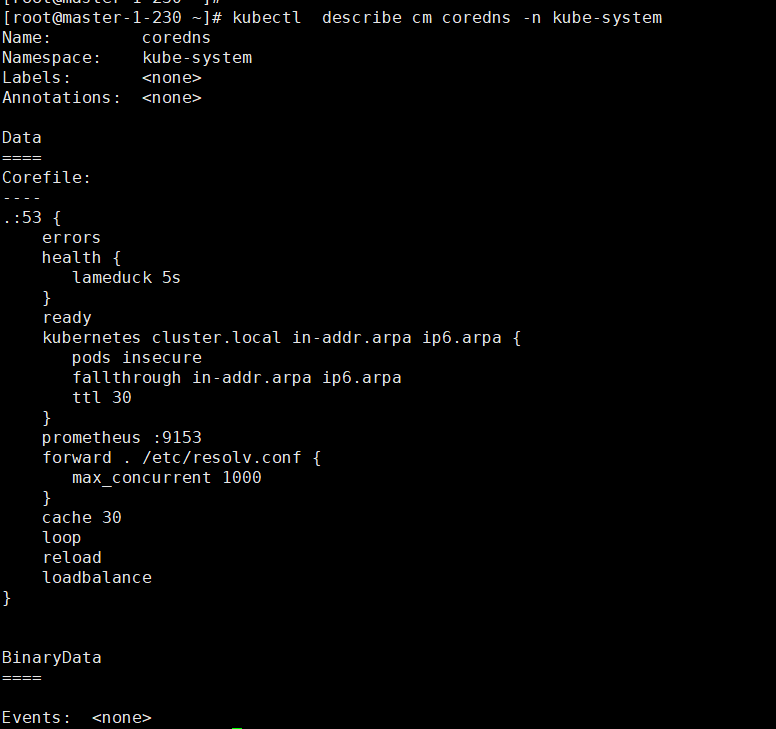

DNS configuration: the CoreDNS settings can be read from the coredns ConfigMap:

kubectl describe cm coredns -n kube-system

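Notes:

- errors: writes errors to standard output.

- health: reports CoreDNS's own health. It listens on port 8080 by default and is typically used for health checks. The status is available at http://10.18.206.207:8080/health (10.18.206.207 being the IP of one of the CoreDNS Pods).

- ready: reports plugin readiness. It listens on port 8181 by default and is typically used for readiness checks. The status is available at http://10.18.206.207:8181/ready, which returns 200 once all plugins are running.

- kubernetes: the CoreDNS kubernetes plugin, which provides name resolution for Services inside the cluster.

- prometheus: exposes CoreDNS's own metrics. Prometheus-format monitoring data is available at http://10.15.0.10:9153/metrics (10.15.0.10 being the IP of the kube-dns Service).

- forward (proxy in older CoreDNS versions): forwards queries to predefined DNS servers. In the default configuration, a name outside the Kubernetes domain is forwarded to the resolvers listed in the host's /etc/resolv.conf.

- cache: DNS cache duration, in seconds.

- loop: loop detection. CoreDNS stops if a forwarding loop is detected.

- reload: automatically reloads a changed Corefile. After editing the ConfigMap, allow about two minutes for the change to take effect.

- loadbalance: a round-robin DNS load balancer that randomizes the order of A, AAAA and MX records in the answer.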

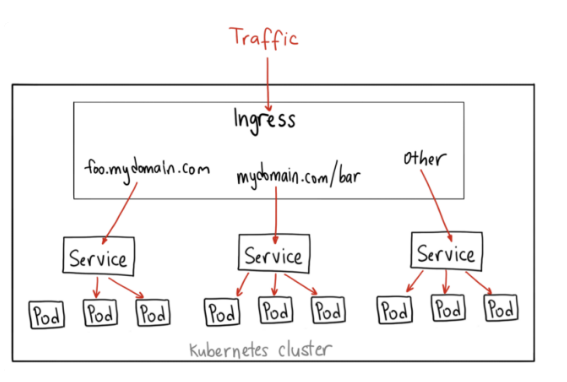

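II. The Ingress API resource

With a Service, we can reach the corresponding Pods through the Service IP (ClusterIP), but only from inside the cluster.

If external users need to reach the resource, NodePort can be used, which exposes a port on the nodes, but it is very inflexible. To solve this, Kubernetes introduced a new API resource, Ingress, which acts as a layer-7 load balancer, similar to Nginx.

Key concepts:

- Ingress defines the concrete routing rules, that is, what access behaviour is wanted.

- Ingress Controller is the tool or service that implements what the Ingress defines; in Kubernetes it runs as Pods.

- IngressClass is the coordinator between Ingress and Ingress Controller. Its purpose is that, when there are several Ingress Controllers, Ingress and Ingress Controller stay independent of each other and are associated through the IngressClass rather than directly.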
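The relationship between the three objects can be sketched as a lookup: a controller serves only the Ingresses whose class points at it. The data classes and the ingresses_for function below are illustrative stand-ins, not Kubernetes client types; the names mying, myingc and nginx.org/ingress-controller are the ones used in this article, while the "other" entries are hypothetical.

```python
# Toy model of how IngressClass decouples Ingress objects from controllers.
from dataclasses import dataclass


@dataclass
class IngressClass:
    name: str
    controller: str  # identifier of the controller implementation


@dataclass
class Ingress:
    name: str
    ingress_class_name: str


def ingresses_for(controller: str, classes: list[IngressClass], ingresses: list[Ingress]) -> list[str]:
    """Names of the Ingresses a given controller should handle."""
    owned_classes = {c.name for c in classes if c.controller == controller}
    return [i.name for i in ingresses if i.ingress_class_name in owned_classes]


if __name__ == "__main__":
    classes = [
        IngressClass("myingc", "nginx.org/ingress-controller"),
        IngressClass("other-class", "example.com/other-controller"),  # hypothetical
    ]
    ingresses = [Ingress("mying", "myingc"), Ingress("other-ing", "other-class")]
    print(ingresses_for("nginx.org/ingress-controller", classes, ingresses))
```

The Ingress never names a controller directly, so a controller can be swapped by changing only the IngressClass.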

Ingress YAML example:

vim mying.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: mying                    ## name of the Ingress
spec:
  ingressClassName: myingc       ## the IngressClass to associate with
  rules:                         ## the routing rules
  - host: ikubernetes.cloud      ## target host name
    http:
      paths:
      - path: /
        pathType: Exact
        backend:                 ## the backend Service
          service:
            name: ngx-svc
            port:
              number: 80

View the Ingress:

# kubectl apply -f mying.yaml

ingress.networking.k8s.io/mying created

# kubectl get ing

NAME CLASS HOSTS ADDRESS PORTS AGE

mying myingc ikubernetes.cloud 80 16s

# kubectl describe ing mying

Name: mying

Labels: <none>

Namespace: default

Address:

Ingress Class: myingc

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

ikubernetes.cloud

/ ngx-svc:80 (10.244.154.6:80,10.244.167.140:80)

Annotations: <none>

Events: <none>

IngressClass YAML example:

vim myingc.yaml

apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: myingc
spec:
  controller: nginx.org/ingress-controller   ## which controller to use

View the IngressClass:

# kubectl apply -f myingc.yaml

ingressclass.networking.k8s.io/myingc created

# kubectl get ingressclass

NAME CONTROLLER PARAMETERS AGE

myingc nginx.org/ingress-controller <none> 9s

Install the ingress controller (project page: https://github.com/nginxinc/kubernetes-ingress):

# curl -O 'https://gitee.com/aminglinux/linux_study/raw/master/k8s/ingress.tar.gz'

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 9434 0 9434 0 0 17182 0 --:--:-- --:--:-- --:--:-- 17183

# tar zxf ingress.tar.gz

# cd ingress

# ll

总用量 8

drwxr-xr-x 3 root root 99 11月 22 2022 common

drwxr-xr-x 2 root root 23 11月 22 2022 rbac

-rwxr-xr-x 1 root root 191 11月 22 2022 remove.sh

-rwxr-xr-x 1 root root 130 11月 22 2022 setup.sh

# ./setup.sh

namespace/nginx-ingress created

serviceaccount/nginx-ingress created

clusterrole.rbac.authorization.k8s.io/nginx-ingress created

clusterrolebinding.rbac.authorization.k8s.io/nginx-ingress created

secret/default-server-secret created

configmap/nginx-config created

namespace/nginx-ingress unchanged

serviceaccount/nginx-ingress unchanged

customresourcedefinition.apiextensions.k8s.io/globalconfigurations.k8s.nginx.org created

customresourcedefinition.apiextensions.k8s.io/policies.k8s.nginx.org created

customresourcedefinition.apiextensions.k8s.io/transportservers.k8s.nginx.org created

customresourcedefinition.apiextensions.k8s.io/virtualserverroutes.k8s.nginx.org created

customresourcedefinition.apiextensions.k8s.io/virtualservers.k8s.nginx.org created

vi ingress-controller.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: ngx-ing
  namespace: nginx-ingress
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ngx-ing
  template:
    metadata:
      labels:
        app: ngx-ing
      #annotations:
        #prometheus.io/scrape: "true"
        #prometheus.io/port: "9113"
        #prometheus.io/scheme: http
    spec:
      serviceAccountName: nginx-ingress
      containers:
      - image: nginx/nginx-ingress:2.2-alpine
        imagePullPolicy: IfNotPresent
        name: ngx-ing
        ports:
        - name: http
          containerPort: 80
        - name: https
          containerPort: 443
        - name: readiness-port
          containerPort: 8081
        - name: prometheus
          containerPort: 9113
        readinessProbe:
          httpGet:
            path: /nginx-ready
            port: readiness-port
          periodSeconds: 1
        securityContext:
          allowPrivilegeEscalation: true
          runAsUser: 101 #nginx
          capabilities:
            drop:
            - ALL
            add:
            - NET_BIND_SERVICE
        env:
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        args:
          - -ingress-class=myingc
          - -health-status
          - -ready-status
          - -nginx-status
          - -nginx-configmaps=$(POD_NAMESPACE)/nginx-config
          - -default-server-tls-secret=$(POD_NAMESPACE)/default-server-secret

Apply the YAML:

# kubectl apply -f ingress-controller.yaml

deployment.apps/ngx-ing created

View the Pod and the Deployment:

# kubectl get po -n nginx-ingress

NAME READY STATUS RESTARTS AGE

ngx-ing-7c88558665-s7srp 1/1 Running 0 38s

# kubectl get deploy -n nginx-ingress

NAME READY UP-TO-DATE AVAILABLE AGE

ngx-ing 1/1 1 1 42s

Forward the ingress controller Pod's port to the master for testing:

# kubectl get pod -n nginx-ingress

NAME READY STATUS RESTARTS AGE

ngx-ing-7c88558665-s7srp 1/1 Running 0 4m6s

# kubectl port-forward -n nginx-ingress ngx-ing-7c88558665-s7srp 8888:80 &

[1] 32232

# Forwarding from 127.0.0.1:8888 -> 80

# netstat -lnp|grep 8888

tcp 0 0 127.0.0.1:8888 0.0.0.0:* LISTEN 32232/kubectl

Test:

# curl -x127.0.0.1:8888 ikubernetes.cloud

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

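</html>

III. Understanding Kubernetes scheduling

The Kubernetes scheduler, kube-scheduler, assigns newly created Pods to suitable nodes in the cluster. Its scheduling algorithm is flexible and can be customized for different needs, for example resource limits, affinity and anti-affinity.

1) How kube-scheduler works

- Watch the API Server: kube-scheduler watches Pod objects on the API Server to find the Pods that need scheduling. It reads each Pod's information, such as its labels and resource requests, through the REST API that the API Server provides.

- Filter feasible nodes: based on the Pod's resource requests and constraints (for example, specific node labels that the Pod requires), kube-scheduler selects, from all nodes registered in the cluster, those that satisfy the conditions.

- Score: kube-scheduler computes a score for every feasible node to decide which one fits best. How the score is computed is determined by the scheduling algorithm.

- Select a node: kube-scheduler picks the node with the highest score as the scheduling target and binds the Pod to it. If several nodes have the same score, it picks one of them at random.

- Update the API Server: kube-scheduler writes the chosen node into the spec field of the Pod object on the API Server; the kubelet on that node is then notified and starts the Pod's containers.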
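The filter, score, select and bind steps above can be sketched in a few lines. This is a toy model written for this article, not kube-scheduler code: the Node and Pod classes, the scoring formula (free resources left after placement) and all function names are assumptions made for illustration.

```python
# Toy scheduling cycle: filter feasible nodes, score them, pick the best, record the binding.
import random
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu: float
    free_mem: float
    labels: dict = field(default_factory=dict)


@dataclass
class Pod:
    name: str
    cpu: float
    mem: float
    node_selector: dict = field(default_factory=dict)
    node_name: Optional[str] = None  # filled in when the Pod is bound


def feasible(pod: Pod, node: Node) -> bool:
    """Filter step: enough free resources and all required labels present."""
    enough = node.free_cpu >= pod.cpu and node.free_mem >= pod.mem
    labels_ok = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return enough and labels_ok


def score(pod: Pod, node: Node) -> float:
    """Score step (assumed formula): more resources left over means a higher score."""
    return (node.free_cpu - pod.cpu) + (node.free_mem - pod.mem)


def schedule(pod: Pod, nodes: list[Node]) -> Optional[str]:
    candidates = [n for n in nodes if feasible(pod, n)]
    if not candidates:
        return None  # the Pod stays Pending
    best = max(score(pod, n) for n in candidates)
    chosen = random.choice([n for n in candidates if score(pod, n) == best])  # random tie-break
    pod.node_name = chosen.name  # "bind": write the node into the Pod
    return chosen.name


if __name__ == "__main__":
    nodes = [Node("node-1-231", 2, 4, {"disktype": "ssd"}), Node("node-1-232", 4, 8)]
    print(schedule(Pod("web", 1, 1), nodes))
    print(schedule(Pod("db", 1, 1, {"disktype": "ssd"}), nodes))
```

A Pod for which schedule returns None corresponds to the Pending state that kubectl reports when no node passes the filter step.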

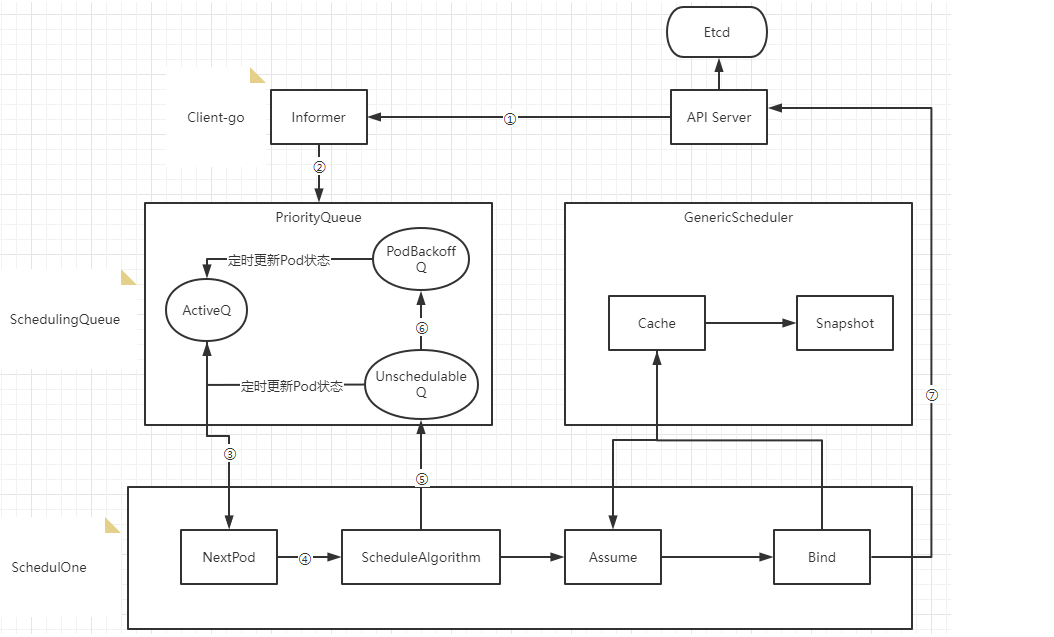

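2) Internal flow of kube-scheduler

- The scheduler registers client-go informer handlers to watch Pod and Node change events from the API Server, and caches the Pod and Node information in the informers.

- The informer handlers push these events into ActiveQ (ActiveQ is a heap that keeps Pods ordered by priority; in each scheduling cycle the scheduler takes the highest-priority Pod from the heap).

- The scheduling loop takes the next object to schedule from ActiveQ through the NextPod method.

- The scheduling algorithm matches and scores Nodes against the Pod to determine the target node.

- If scheduling errors out or fails, sched.Error is called and the Pod is written to UnschedulableQ.

- Once a Pod has been unschedulable for longer than the backoff time, it moves from Unschedulable to PodBackoff, that is, its information is written to PodBackoffQ.

- client-go sends a bind request to the API Server, so binding happens asynchronously.

Binding is an asynchronous process. The scheduler first creates in its cache an Assume Pod, a copy of the original Pod, to simulate the binding, for example by setting the Assume Pod's NodeName to the name of the target node, while the actual bind operation runs asynchronously. Before binding the Pod to the Node, the scheduler has to make sure that volumes have been bound. Once all pre-bind work is confirmed, the scheduler sends a Bind object to the API Server, and the kubelet on the target node runs the Pod.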
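The priority-ordered ActiveQ and the unschedulable queue can be sketched with a heap. This is a simplified model for illustration only: the class and method names are invented here, and the backoff timing described above is left out, so retrying is triggered manually.

```python
# Simplified scheduling queue: a max-priority heap plus a holding area for unschedulable Pods.
import heapq
import itertools
from typing import Optional


class SchedulingQueue:
    def __init__(self) -> None:
        self._active: list = []           # heap of (-priority, sequence, pod_name)
        self._unschedulable: dict = {}    # pod_name -> priority
        self._seq = itertools.count()     # keeps FIFO order among equal priorities

    def add(self, pod_name: str, priority: int) -> None:
        # heapq is a min-heap, so the priority is negated to pop the highest first.
        heapq.heappush(self._active, (-priority, next(self._seq), pod_name))

    def next_pod(self) -> Optional[str]:
        if not self._active:
            return None
        _, _, pod_name = heapq.heappop(self._active)
        return pod_name

    def mark_unschedulable(self, pod_name: str, priority: int) -> None:
        self._unschedulable[pod_name] = priority

    def retry_unschedulable(self) -> None:
        # Assumption: called when the backoff period has elapsed.
        for pod_name, priority in list(self._unschedulable.items()):
            del self._unschedulable[pod_name]
            self.add(pod_name, priority)


if __name__ == "__main__":
    q = SchedulingQueue()
    q.add("batch-job", 0)
    q.add("critical-api", 100)
    q.add("web", 50)
    print(q.next_pod(), q.next_pod(), q.next_pod())
```

Pods come out in priority order regardless of the order in which they were added; a Pod that fails scheduling waits outside the heap until it is retried.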

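3) Scoring nodes

Node scores are computed by the scheduler's scoring algorithm rather than by a fixed formula. By default kube-scheduler uses its built-in scoring plugins (DefaultPreemption, by contrast, is the default preemption plugin, which runs when no node fits the Pod). Scoring mainly covers the following aspects:

- Node resource utilization: kube-scheduler takes each node's CPU and memory utilization into account. The lower the utilization, the higher the node's score.

- Number of Pods on the node: kube-scheduler takes the number of Pods already running on each node into account. If a node already hosts many Pods, a new Pod may cause resource contention and congestion, so the node's score is lowered accordingly.

- Affinity and anti-affinity: kube-scheduler takes the Pod's affinity and anti-affinity rules into account. If the Pod has affinity with a node, for example because it requires specific node labels or should run in the same zone as the node, the node's score rises. If anti-affinity applies, for example because the Pod must not share a node with certain other Pods, the node's score drops.

- Network latency between nodes: latency can also be factored into the score, with lower latency giving a higher score. Note that this is not part of the default scoring plugins and needs a custom scoring plugin.

- Pod priority: kube-scheduler also takes Pod priority into account. Higher-priority Pods, such as those belonging to critical workloads, are scheduled first and may preempt lower-priority Pods.

The relative weights of these factors can be adjusted through the scheduler configuration file passed to kube-scheduler.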
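How weights combine individual scores can be shown with a short sketch. The factor names ("resources", "affinity") and the numbers are made up for illustration and are not real plugin names; the only assumption is that each factor yields a score that is multiplied by its weight and summed.

```python
# Weighted sum of per-factor scores; a factor without an explicit weight counts once.

def weighted_score(factor_scores: dict, weights: dict) -> float:
    return sum(weights.get(name, 1) * value for name, value in factor_scores.items())


def best_node(scores_by_node: dict, weights: dict) -> str:
    """Name of the node with the highest weighted total."""
    return max(scores_by_node, key=lambda node: weighted_score(scores_by_node[node], weights))


if __name__ == "__main__":
    scores = {
        "node-1-231": {"resources": 80, "affinity": 0},
        "node-1-232": {"resources": 40, "affinity": 100},
    }
    print(best_node(scores, {"resources": 1, "affinity": 1}))
    print(best_node(scores, {"resources": 3, "affinity": 1}))
```

Raising the weight of one factor can change which node wins even though the individual scores stay the same.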

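4) Scheduling strategies

- Default strategy (DefaultPreemption): the default strategy of kube-scheduler. Its basic principle is to place the Pod on the node that satisfies its requirements at the lowest cost. If no such node can be found, it considers preemption, that is, evicting some already scheduled Pods to make room for the new Pod.

- Priority-based strategy (Priority): orders scheduling by Pod priority, so that higher-priority Pods are scheduled first. It is enabled by setting a PriorityClass on the Pod.

- Node affinity strategy (NodeAffinity): selects nodes whose labels or other properties match what the Pod requires. It is configured with nodeAffinity in the Pod definition.

- Pod affinity strategy (PodAffinity): selects the nodes that already run other Pods similar to this Pod, based on Pod labels or other conditions. It is configured with podAffinity in the Pod definition.

- Pod anti-affinity strategy (PodAntiAffinity): selects nodes that do not run similar Pods, so that similar Pods do not end up on the same node. It is configured with podAntiAffinity in the Pod definition.

- Resource-based strategy (ResourceLimits): selects the node with the most available resources to satisfy the Pod's resource needs. It is driven by the resource requests and limits set in the Pod definition.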

IV. Node selector (NodeSelector)

NodeSelector places a Pod on a Node whose labels match the labels defined in the Pod.
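The matching rule is a plain equality check on every key in the selector. A minimal sketch follows; the function name is invented for this example, and the label sets are the ones node-1-231 has in this article, before and after it receives the disktype=ssd label.

```python
# nodeSelector semantics: every key/value pair in the selector must be present on the node.

def matches_node_selector(node_labels: dict, node_selector: dict) -> bool:
    return all(node_labels.get(key) == value for key, value in node_selector.items())


if __name__ == "__main__":
    selector = {"disktype": "ssd"}
    before = {"kubernetes.io/hostname": "node-1-231", "kubernetes.io/os": "linux"}
    after = {**before, "disktype": "ssd"}
    print(matches_node_selector(before, selector))
    print(matches_node_selector(after, selector))
```

While no node carries the label the Pod cannot be placed and stays Pending; once a node is labelled, the check passes and the Pod can be scheduled there.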

NodeSelector example:

cat > nodeselector.yaml <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: nginx-ssd
spec:
  containers:
  - name: nginx-ssd
    image: nginx:1.25.2
    imagePullPolicy: IfNotPresent
    ports:
    - containerPort: 80
  nodeSelector:
    disktype: ssd
EOF

Apply the YAML file:

# kubectl apply -f nodeselector.yaml

pod/nginx-ssd created

Check the Pod status:

# kubectl get po nginx-ssd

NAME READY STATUS RESTARTS AGE

nginx-ssd 0/1 Pending 0 33s

Describe the Pod to see why it is Pending:

# kubectl describe pod nginx-ssd

Name: nginx-ssd

Namespace: default

Priority: 0

Service Account: default

Node: <none>

Labels: <none>

Annotations: <none>

Status: Pending

IP:

IPs: <none>

Containers:

nginx-ssd:

Image: nginx:1.25.2

Port: 80/TCP

Host Port: 0/TCP

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-cl9lb (ro)

Conditions:

Type Status

PodScheduled False

Volumes:

kube-api-access-cl9lb:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional: <nil>

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: disktype=ssd

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 73s default-scheduler 0/4 nodes are available: 1 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }, 3 node(s) didn't match Pod's node affinity/selector. preemption: 0/4 nodes are available: 4 Preemption is not helpful for scheduling..

Label a Node:

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master-1-230 Ready control-plane 24d v1.27.6

node-1-231 Ready <none> 24d v1.27.6

node-1-232 Ready <none> 24d v1.27.6

node-1-233 Ready <none> 18d v1.27.6

# kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

master-1-230 Ready control-plane 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=master-1-230,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

node-1-231 Ready <none> 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-231,kubernetes.io/os=linux

node-1-232 Ready <none> 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-232,kubernetes.io/os=linux

node-1-233 Ready <none> 18d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-233,kubernetes.io/os=linux

# kubectl label node node-1-231 disktype=ssd

node/node-1-231 labeled

View the labels of the Node (node-1-231):

# kubectl get node node-1-231 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node-1-231 Ready <none> 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-231,kubernetes.io

View the Pod information:

# kubectl get po nginx-ssd

NAME READY STATUS RESTARTS AGE

nginx-ssd 1/1 Running 0 5m37s

# kubectl describe po nginx-ssd |grep -i node

Node: node-1-231/192.168.1.231

Node-Selectors: disktype=ssd

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Warning FailedScheduling 5m41s default-scheduler 0/4 nodes are available: 1 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }, 3 node(s) didn't match Pod's node affinity/selector. preemption: 0/4 nodes are available: 4 Preemption is not helpful for scheduling..

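Normal Scheduled 2m7s default-scheduler Successfully assigned default/nginx-ssd to node-1-231

V. Node affinity (NodeAffinity)

The goal is to deploy Pods onto Nodes that meet the stated requirements.

Keywords:

- requiredDuringSchedulingIgnoredDuringExecution: a hard match that must be satisfied.

- preferredDuringSchedulingIgnoredDuringExecution: a soft match that is satisfied if possible but not guaranteed.

Example: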

apiVersion: v1
kind: Pod
metadata:
  name: with-node-affinity
spec:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:   ## the rules below must be satisfied
        nodeSelectorTerms:
        - matchExpressions:
          - key: env
            operator: In        ## supported operators: In, NotIn, Exists, DoesNotExist, Gt, Lt
            values:
            - test
            - dev
      preferredDuringSchedulingIgnoredDuringExecution:  ## satisfied if possible, not guaranteed
      - weight: 1
        preference:
          matchExpressions:
          - key: project
            operator: In
            values:
            - aminglinux
  containers:
  - name: with-node-affinity
    image: redis:6.0.6

Notes:

- If both nodeSelector and nodeAffinity are specified, both must be satisfied.

- If nodeAffinity specifies several nodeSelectorTerms, it is enough for one of them to match.

- If one entry of nodeSelectorTerms contains several matchExpressions, all of them must match.
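The rules above (terms are ORed, expressions inside a term are ANDed) and the six operators can be sketched as follows. This is an illustrative re-implementation written for this article, not code from Kubernetes; the function names are assumptions, and terms are represented as plain dictionaries shaped like the YAML.

```python
# Evaluate required node affinity against a node's labels.

def expr_matches(labels: dict, expr: dict) -> bool:
    key, op = expr["key"], expr["operator"]
    values = expr.get("values", [])
    if op == "In":
        return key in labels and labels[key] in values
    if op == "NotIn":
        return key not in labels or labels[key] not in values
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    if op in ("Gt", "Lt"):
        # Gt/Lt compare the label value with a single integer.
        try:
            actual, bound = int(labels[key]), int(values[0])
        except (KeyError, IndexError, ValueError):
            return False
        return actual > bound if op == "Gt" else actual < bound
    raise ValueError(f"unsupported operator: {op}")


def term_matches(labels: dict, term: dict) -> bool:
    """All matchExpressions of one term must match (AND); an empty term matches nothing."""
    exprs = term.get("matchExpressions", [])
    return bool(exprs) and all(expr_matches(labels, e) for e in exprs)


def required_affinity_matches(labels: dict, terms: list) -> bool:
    """One matching term is enough (OR)."""
    return any(term_matches(labels, t) for t in terms)


if __name__ == "__main__":
    terms = [{"matchExpressions": [{"key": "env", "operator": "In", "values": ["test", "dev"]}]}]
    print(required_affinity_matches({"env": "dev"}, terms))
    print(required_affinity_matches({"env": "prod"}, terms))
```

With the env In [test, dev] term from the example, a node labelled env=dev qualifies while a node labelled env=prod, or a node with no env label at all, does not.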

node-affinity演示示例

cat > nodeAffinity.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: node-affinity

spec:

containers:

- name: my-container

image: nginx:1.25.2

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: special-node

operator: Exists

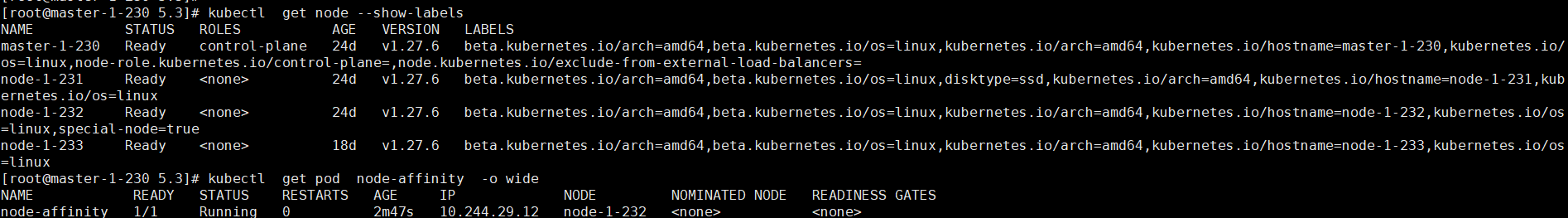

EOF给其中一个节点打标签

# kubectl label nodes node-1-232 special-node=true

node/node-1-232 labeled

Apply the YAML file:

# kubectl apply -f nodeAffinity.yaml

pod/node-affinity created

Check which node the Pod is running on:

# kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

master-1-230 Ready control-plane 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=master-1-230,kubernetes.io/oontrol-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

node-1-231 Ready <none> 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,disktype=ssd,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-231,kube

node-1-232 Ready <none> 24d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-232,kubernetes.io/os=

node-1-233 Ready <none> 18d v1.27.6 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node-1-233,kubernetes.io/os=

# kubectl get pod node-affinity -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

node-affinity 1/1 Running 0 117s 10.244.29.12 node-1-232 <none> <none>
