背景

线上发现elasticsearch集群状态red,并且有个es节点jvm内存使用不断升高,直到gc后依然内存不够使用,服务停止。查看日志,elasticsearch出现OOM报错。

[2023-12-06T08:21:26,706][ERROR][o.e.b.ElasticsearchUncaughtExceptionHandler] [node-10.136.5.85] fatal error in thread [Thread-1243], exiting

java.lang.OutOfMemoryError: Java heap space

at io.netty.util.internal.PlatformDependent.allocateUninitializedArray(PlatformDependent.java:281) ~[?:?]

at io.netty.buffer.PoolArena$HeapArena.newByteArray(PoolArena.java:662) ~[?:?]

at io.netty.buffer.PoolArena$HeapArena.newChunk(PoolArena.java:672) ~[?:?]

at io.netty.buffer.PoolArena.allocateNormal(PoolArena.java:247) ~[?:?]

at io.netty.buffer.PoolArena.allocate(PoolArena.java:227) ~[?:?]

at io.netty.buffer.PoolArena.allocate(PoolArena.java:147) ~[?:?]

at io.netty.buffer.PooledByteBufAllocator.newHeapBuffer(PooledByteBufAllocator.java:339) ~[?:?]

at io.netty.buffer.AbstractByteBufAllocator.heapBuffer(AbstractByteBufAllocator.java:168) ~[?:?]

at io.netty.buffer.AbstractByteBufAllocator.heapBuffer(AbstractByteBufAllocator.java:159) ~[?:?]

at org.elasticsearch.transport.NettyAllocator$NoDirectBuffers.heapBuffer(NettyAllocator.java:137) ~[?:?]

at org.elasticsearch.transport.NettyAllocator$NoDirectBuffers.ioBuffer(NettyAllocator.java:122) ~[?:?]

at io.netty.channel.DefaultMaxMessagesRecvByteBufAllocator$MaxMessageHandle.allocate(DefaultMaxMessagesRecvByteBufAllocator.java:114) ~[?:?]

at io.netty.channel.nio.AbstractNioByteChannel$NioByteUnsafe.read(AbstractNioByteChannel.java:147) ~[?:?]

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:714) ~[?:?]

at io.netty.channel.nio.NioEventLoop.processSelectedKeysPlain(NioEventLoop.java:615) ~[?:?]

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:578) ~[?:?]

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:493) ~[?:?]

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:989) ~[?:?]

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74) ~[?:?]

at java.lang.Thread.run(Thread.java:832) [?:?]

[2023-12-06T08:21:26,707][WARN ][o.e.h.AbstractHttpServerTransport] [node-10.136.5.85] caught exception while handling client http traffic, closing connection Netty4HttpChannel{localAddress=/10.136.5.85:9200, remoteAddress=/10.136.5.71:49648}

java.lang.Exception: java.lang.OutOfMemoryError: Java heap space

at org.elasticsearch.http.netty4.Netty4HttpRequestHandler.exceptionCaught(Netty4HttpRequestHandler.java:69) [transport-netty4-client-7.8.1.jar:7.8.1]

at io.netty.channel.AbstractChannelHandlerContext.invokeExceptionCaught(AbstractChannelHandlerContext.java:302) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.AbstractChannelHandlerContext.invokeExceptionCaught(AbstractChannelHandlerContext.java:281) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.AbstractChannelHandlerContext.fireExceptionCaught(AbstractChannelHandlerContext.java:273) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.DefaultChannelPipeline$HeadContext.exceptionCaught(DefaultChannelPipeline.java:1377) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.AbstractChannelHandlerContext.invokeExceptionCaught(AbstractChannelHandlerContext.java:302) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.AbstractChannelHandlerContext.invokeExceptionCaught(AbstractChannelHandlerContext.java:281) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.DefaultChannelPipeline.fireExceptionCaught(DefaultChannelPipeline.java:907) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.AbstractNioByteChannel$NioByteUnsafe.handleReadException(AbstractNioByteChannel.java:125) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.AbstractNioByteChannel$NioByteUnsafe.read(AbstractNioByteChannel.java:174) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:714) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.NioEventLoop.processSelectedKeysPlain(NioEventLoop.java:615) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:578) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:493) [netty-transport-4.1.49.Final.jar:4.1.49.Final]

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:989) [netty-common-4.1.49.Final.jar:4.1.49.Final]

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74) [netty-common-4.1.49.Final.jar:4.1.49.Final]

at java.lang.Thread.run(Thread.java:832) [?:?]

Caused by: java.lang.OutOfMemoryError: Java heap space

at io.netty.util.internal.PlatformDependent.allocateUninitializedArray(PlatformDependent.java:281) ~[?:?]

at io.netty.buffer.PoolArena$HeapArena.newByteArray(PoolArena.java:662) ~[?:?]

at io.netty.buffer.PoolArena$HeapArena.newChunk(PoolArena.java:672) ~[?:?]

at io.netty.buffer.PoolArena.allocateNormal(PoolArena.java:247) ~[?:?]

at io.netty.buffer.PoolArena.allocate(PoolArena.java:227) ~[?:?]

at io.netty.buffer.PoolArena.allocate(PoolArena.java:147) ~[?:?]

at io.netty.buffer.PooledByteBufAllocator.newHeapBuffer(PooledByteBufAllocator.java:339) ~[?:?]

at io.netty.buffer.AbstractByteBufAllocator.heapBuffer(AbstractByteBufAllocator.java:168) ~[?:?]

at io.netty.buffer.AbstractByteBufAllocator.heapBuffer(AbstractByteBufAllocator.java:159) ~[?:?]

at org.elasticsearch.transport.NettyAllocator$NoDirectBuffers.heapBuffer(NettyAllocator.java:137) ~[?:?]

at org.elasticsearch.transport.NettyAllocator$NoDirectBuffers.ioBuffer(NettyAllocator.java:122) ~[?:?]

at io.netty.channel.DefaultMaxMessagesRecvByteBufAllocator$MaxMessageHandle.allocate(DefaultMaxMessagesRecvByteBufAllocator.java:114) ~[?:?]

at io.netty.channel.nio.AbstractNioByteChannel$NioByteUnsafe.read(AbstractNioByteChannel.java:147) ~[?:?]

... 7 more

搜索之前的广大网友的经验,discuss论坛有和我这一模一样的报错,但是没有回答。相似的报错,GitHub上有一个issue,但是已经在7.4版本解决了。我的版本号是7.8.1,说明问题不是一个问题。

先排查机器内存是否不够用,发现不是,是jvm.options配置的-Xms和-Xmx内存不够用,尝试配置到机器内存一半,配置30G,观察,依然出现同样的问题。

排查报错日志,发现是netty的报错,怀疑是写入出现问题。

Dump一下内存,分析下是什么占用了这么多的内存。

并发写入,用到了ScheduledThreadPool ,创建了大量线程 gc无法回收线程中内存导致内存不够用了。

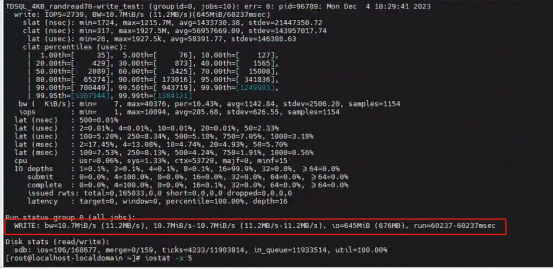

既然是写入问题,那测试下硬盘问题,使用fio测试硬盘随机读写

使用iostat -xdm 10 查看实时的硬盘读写,大概3M/s 磁盘随机读写都有10M/s了,说明不是磁盘的问题。

查看网络问题,ping有问题的机器,发现了端倪,只有他又慢,延迟又高,其它都比较低。

使用iperf检测网络问题。还没检查,得知客户给这台机器插得网口是百兆网口,其它机器都是千兆网口。

结合elasticsearch 集群Transport业务逻辑,分片平均分配到所有机器上,每台机器都接收写入的请求。整个集群的网络分发数据有木桶效应,一个网口慢会让整个集群都慢。(如果没有特别指定分片所处的节点)

结论

出现上述报错,优先排查集群网络问题,查看数据的写入量是否超过了网口速率的上限。改成千兆网口即可