First, the four configuration files.

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>billsaifu:2181,hadoop1:2181,hadoop2:2181</value>

</property>

<!-- <property>-->

<!-- <name>fs.defaultFS</name>-->

<!-- <value>hdfs://billsaifu:9000</value>-->

<!-- </property>-->

<property>

<name>hadoop.http.staticuser.user</name>

<value>bill</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop-3.2.4/tmp</value>

</property>

<property>

<name>dfs.datanode.max.xcievers</name>

<value>4096</value>

<description>A DataNode has an upper limit on the number of files it can serve at the same time; set it to at least 4096.</description>

</property>

<property>

<name>hadoop.proxyuser.bill.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.bill.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.bill.users</name>

<value>*</value>

</property>

</configuration>

hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.replication.min</name>

<value>1</value>

</property>

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>billsaifu:9000</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>billsaifu:9870</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>hadoop2:9000</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>hadoop2:9870</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://billsaifu:8485;hadoop1:8485;hadoop2:8485/mycluster</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/mnt/hadoop_data/journal_data</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>

sshfence

</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/bill/.ssh/id_rsa</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.connect-timeout</name>

<value>30000</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/mnt/hadoop_data/hadoop_nndata</value>

</property>

<property>

<name>dfs.namenode.handler.count</name>

<value>100</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/mnt/hadoop_data/hdfs_dndata</value>

</property>

<property>

<name>dfs.permissions.superusergroup</name>

<value>freedom</value>

</property>

<property>

<name>dfs.client.use.datanode.hostname</name>

<value>true</value>

</property>

<property>

<name>dfs.datanode.use.datanode.hostname</name>

<value>true</value>

</property>

<property>

<name>dfs.namenode.clusterID</name>

<value>hadoopMaster</value>

</property>

<!-- NameNode web UI address -->

<!-- <property>-->

<!-- <name>dfs.namenode.http-address</name>-->

<!-- <value>billsaifu:9870</value>-->

<!-- </property>-->

<!-- Secondary NameNode web UI address -->

<!-- <property>-->

<!-- <name>dfs.namenode.secondary.http-address</name>-->

<!-- <value>hadoop2:9868</value>-->

<!-- </property>-->

</configuration>

mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx1096M</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>mapreduce.reduce.java.opts</name>

<value>-Xmx1638M</value>

</property>

<property>

<name>mapreduce.task.io.sort.mb</name>

<value>512</value>

</property>

<property>

<name>mapreduce.task.io.sort.factor</name>

<value>100</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>billsaifu:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>billsaifu:19888</value>

</property>

</configuration>

yarn-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_HOME,PATH,LANG,TZ,HADOOP_MAPRED_HOME</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/mnt/hadoop_data/yarn_nmdata</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.scheduler.capacity.root.QUEUE_CRA.capacity</name>

<value>50</value>

</property>

<property>

<name>yarn.scheduler.capacity.root.QUEUE_CRA.maximum-capacity</name>

<value>100</value>

</property>

<property>

<name>yarn.scheduler.capacity.root.QUEUE_CRA.user-limit-factor</name>

<value>1</value>

</property>

<property>

<name>yarn.scheduler.capacity.root.QUEUE_CRA.state</name>

<value>RUNNING</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log.server.url</name>

<value>http://billsaifu:19888/jobhistory/logs</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>yrc</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hadoop1</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hadoop2</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>billsaifu:2181,hadoop1:2181,hadoop2:2181</value>

</property>

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

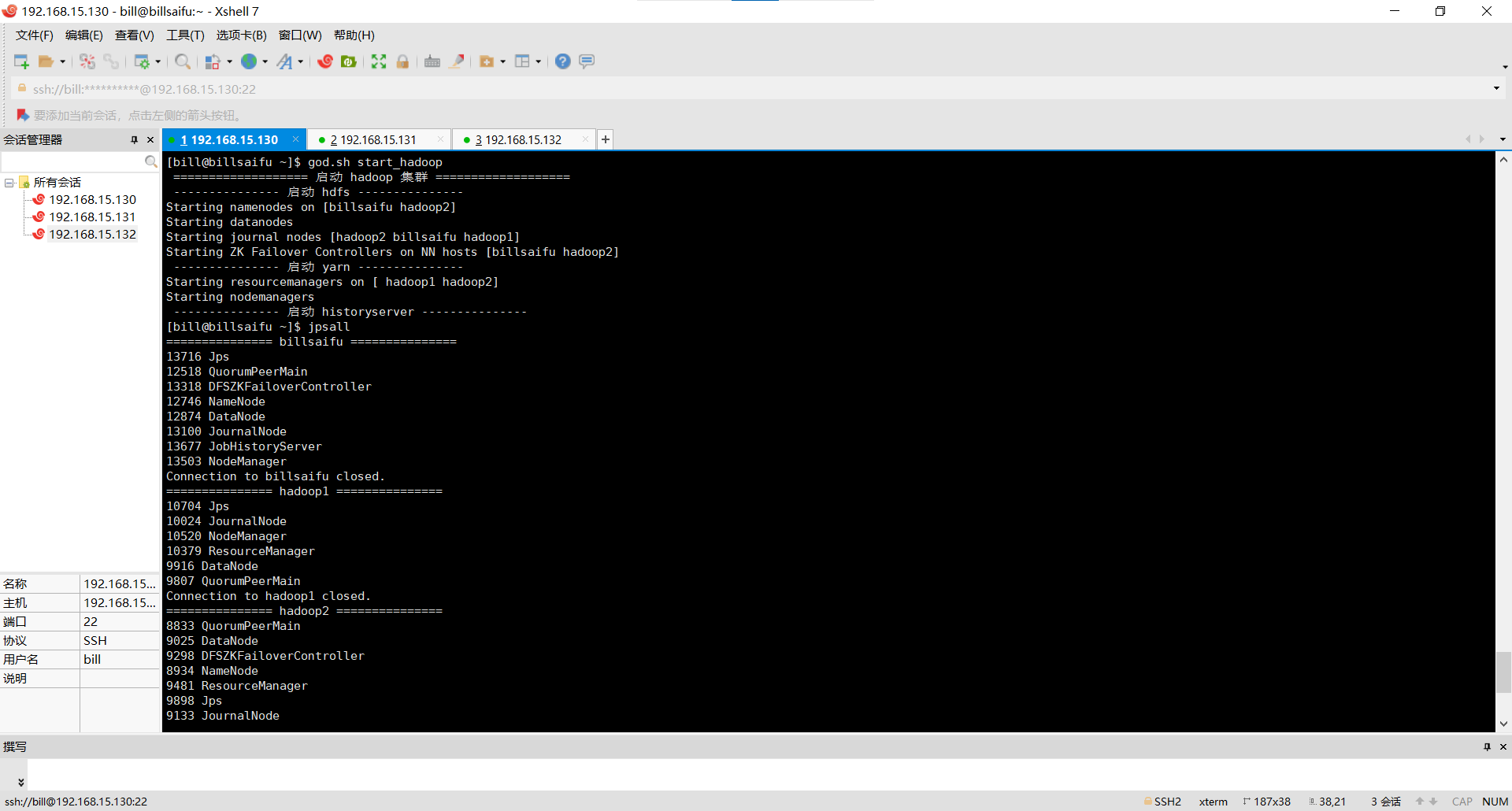

</configuration>启动journalnode

billsaifu: hadoop-daemon.sh start journalnode

hadoop1: hadoop-daemon.sh start journalnode

hadoop2: hadoop-daemon.sh start journalnode
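The same step can be run from one machine instead of logging in to each host. The sketch below is only an illustration: `journalnode_hosts` and `start_journalnodes` are hypothetical helper names, passwordless SSH between the nodes is assumed, and `hdfs --daemon start journalnode` is the Hadoop 3 form of the `hadoop-daemon.sh` command used above. The host list is taken from the `qjournal://` URI in `dfs.namenode.shared.edits.dir`, so it stays in sync with hdfs-site.xml.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the JournalNode hostnames from a qjournal:// URI.
journalnode_hosts() {
  local uri="$1"
  local hostports="${uri#qjournal://}"   # strip the scheme
  hostports="${hostports%%/*}"           # strip the trailing /<nameservice>
  # Entries are separated by ';' and each one looks like host:port.
  echo "$hostports" | tr ';' '\n' | cut -d: -f1
}

# Hypothetical helper: start a JournalNode on every host named in the URI.
# Assumes passwordless SSH and that `hdfs` is on the remote PATH.
start_journalnodes() {
  local host
  for host in $(journalnode_hosts "$1"); do
    ssh "$host" 'hdfs --daemon start journalnode'
  done
}
```

With the configuration above, the call would be `start_journalnodes 'qjournal://billsaifu:8485;hadoop1:8485;hadoop2:8485/mycluster'`.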

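Format the NameNode (on billsaifu)

hdfs namenode -format

On hadoop2, sync the NameNode metadata from billsaifu (the NameNode on billsaifu must already be running)

hdfs namenode -bootstrapStandby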

Initialize the failover state in ZooKeeper

hdfs zkfc -formatZK
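`hdfs zkfc -formatZK` needs a running ZooKeeper ensemble, so it can help to probe the quorum first. The following is a rough sketch, not part of the original setup: `quorum_members` and `quorum_reachable` are hypothetical helpers, the 2-second timeout is arbitrary, and an open TCP port only shows that something is listening, not that ZooKeeper is healthy.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the host:port members of a quorum string
# (the value of ha.zookeeper.quorum), one per line.
quorum_members() {
  echo "$1" | tr ',' '\n'
}

# Hypothetical helper: return 0 only if every member accepts a TCP connection.
# Uses bash's /dev/tcp pseudo-device through `bash -c`.
quorum_reachable() {
  local member host port
  for member in $(quorum_members "$1"); do
    host="${member%%:*}"
    port="${member##*:}"
    if ! timeout 2 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null; then
      echo "cannot reach $host:$port" >&2
      return 1
    fi
  done
}
```

A possible use is `quorum_reachable 'billsaifu:2181,hadoop1:2181,hadoop2:2181' && hdfs zkfc -formatZK`.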

Start the cluster with start-all.sh

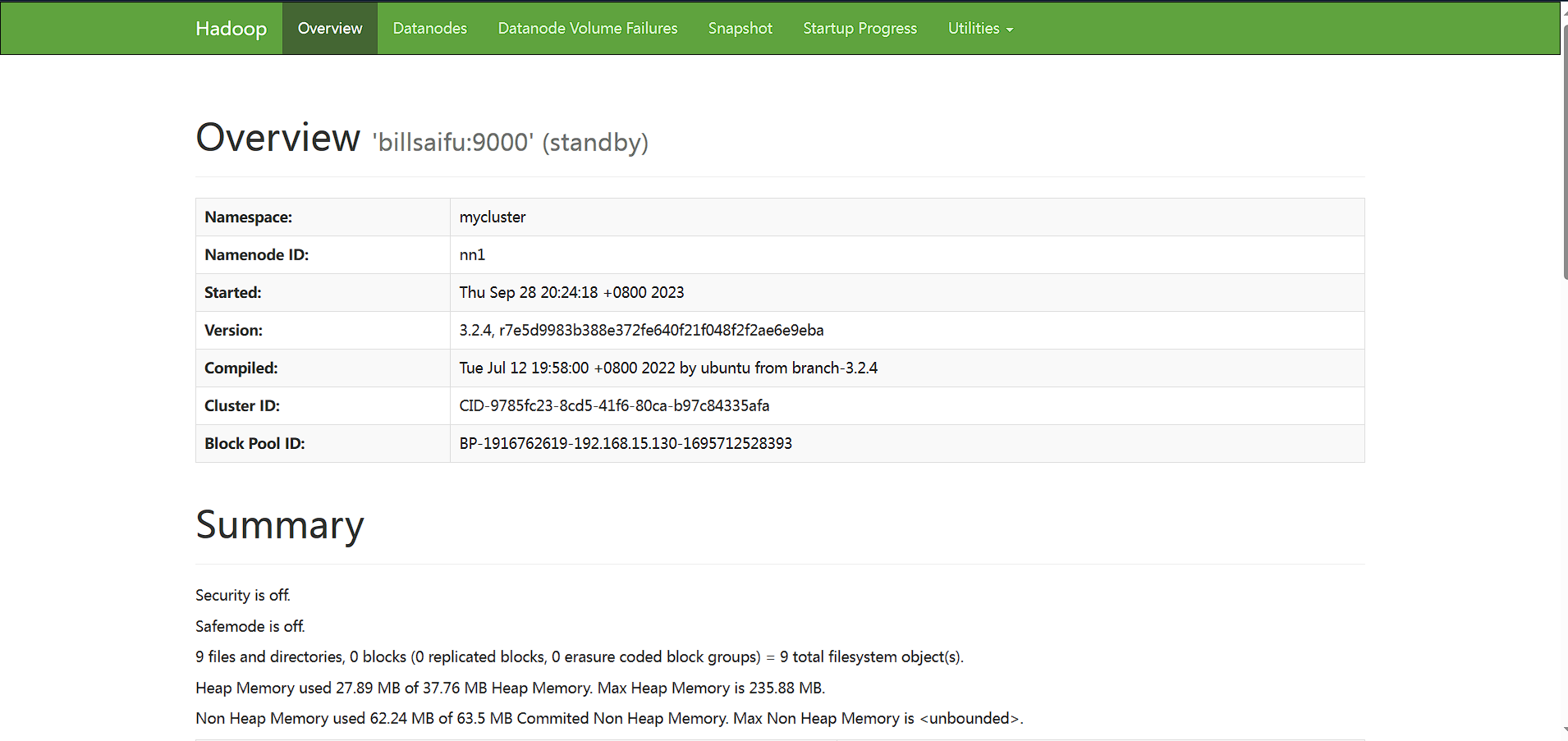
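Once the daemons are up, one way to confirm that HA is working is to check that exactly one NameNode and exactly one ResourceManager report `active`. The sketch below assumes the IDs from the configuration above (`nn1`/`nn2`, `rm1`/`rm2`); `exactly_one_active` and `check_ha` are hypothetical helper names, while `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState` are the stock admin commands.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if exactly one argument equals "active".
exactly_one_active() {
  local count=0 state
  for state in "$@"; do
    [ "$state" = "active" ] && count=$((count + 1))
  done
  [ "$count" -eq 1 ]
}

# Hypothetical helper: query both NameNodes and both ResourceManagers.
# Must run on a node where the hdfs and yarn commands are configured.
check_ha() {
  exactly_one_active "$(hdfs haadmin -getServiceState nn1)" \
                     "$(hdfs haadmin -getServiceState nn2)" || return 1
  exactly_one_active "$(yarn rmadmin -getServiceState rm1)" \
                     "$(yarn rmadmin -getServiceState rm2)"
}
```

Running `check_ha && echo "HA looks healthy"` on any cluster node gives a quick pass/fail signal; two `standby` NameNodes usually mean the ZKFC daemons are not running.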

还有一件事情需要注意的是

因为之前我搭建了传统的hadoop集群因此当我初始化namenode后,datanode中的namenode是原来的那个版本需要修改过来不然无法启动datanode

进入hadoop_nndate下的VERSION

复制这个ID

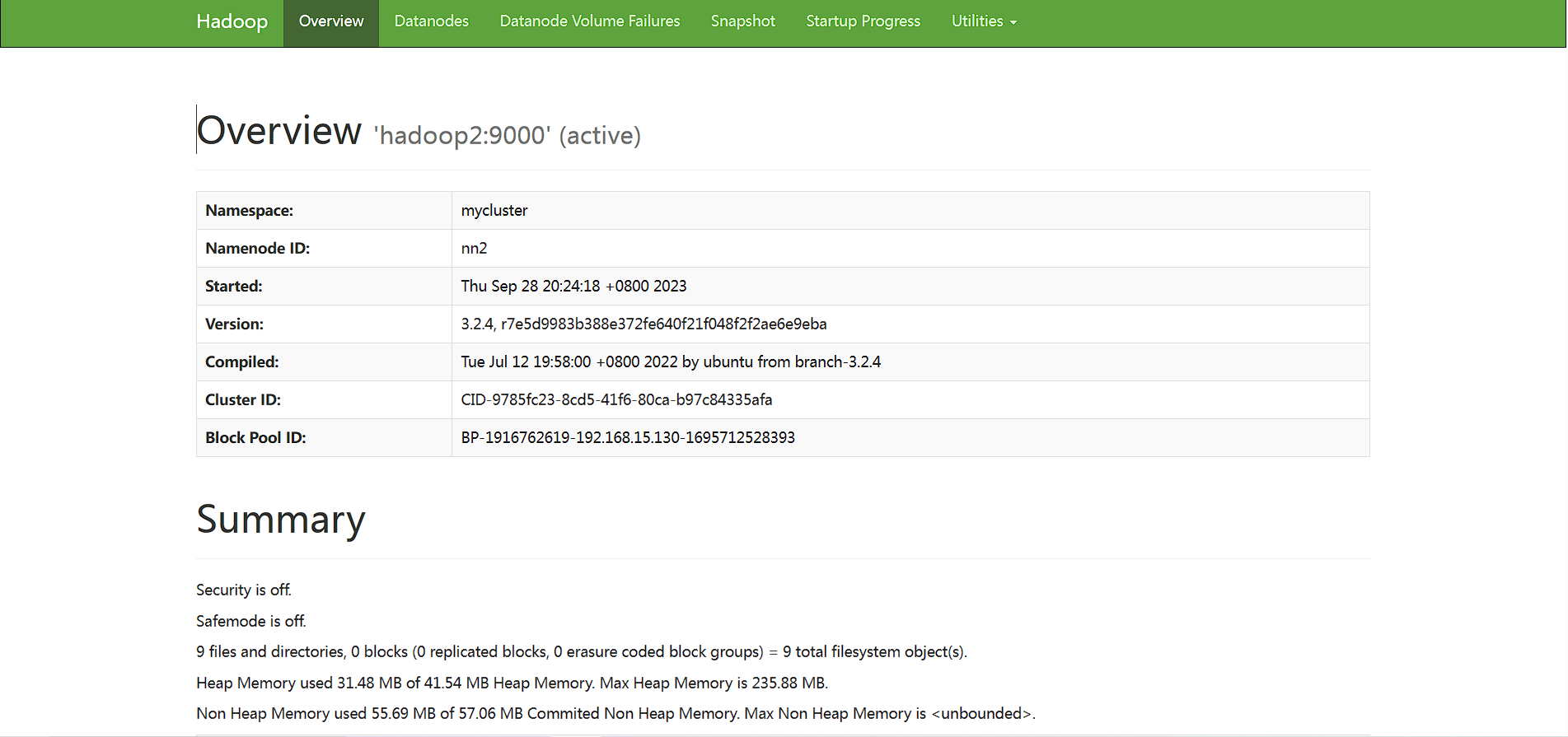

clusterID=CID-9785fc23-8cd5-41f6-80ca-b97c84335afa替换datanode的VESION的ID
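The clusterID fix can also be scripted rather than edited by hand. This is a minimal sketch under a few assumptions: `sync_cluster_id` is a hypothetical helper, both VERSION files are readable on the machine where it runs (copy the NameNode's file over first if they are not), and the DataNode is stopped while its file is rewritten. A `.bak` copy of the DataNode file is kept.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the clusterID line from a NameNode VERSION file
# into a DataNode VERSION file, keeping a .bak copy of the original.
sync_cluster_id() {
  local nn_version="$1" dn_version="$2" cid
  cid="$(grep '^clusterID=' "$nn_version" | cut -d= -f2)"
  [ -n "$cid" ] || { echo "no clusterID in $nn_version" >&2; return 1; }
  cp "$dn_version" "$dn_version.bak"
  # Rewrite only the clusterID line; every other line is passed through as-is.
  sed "s/^clusterID=.*/clusterID=$cid/" "$dn_version.bak" > "$dn_version"
}
```

With the directories configured above, the two files would normally be `/mnt/hadoop_data/hadoop_nndata/current/VERSION` and `/mnt/hadoop_data/hdfs_dndata/current/VERSION`, so the call is `sync_cluster_id <NameNode VERSION> <DataNode VERSION>` on each DataNode host.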