1. Kubernetes Cluster Backup and Restore

1.1 Getting the etcdctl binary

Check the etcd version:

# kubectl -n kube-system exec -it $(kubectl get po -n kube-system |grep etcd- |head -1|awk '{print $1}') -- etcd --version

etcd Version: 3.5.7

Git SHA: 215b53cf3

Go Version: go1.17.13

Go OS/Arch: linux/amd64

Download the etcd 3.5.7 release package from GitHub:

wget https://github.com/etcd-io/etcd/releases/download/v3.5.7/etcd-v3.5.7-linux-amd64.tar.gz

Extract etcd-v3.5.7-linux-amd64.tar.gz:

tar zxf etcd-v3.5.7-linux-amd64.tar.gz -C /opt/

Symlink the executable into /bin/:

ln -s /opt/etcd-v3.5.7-linux-amd64/etcdctl /bin/

1.2 Backing up on the master-1-230 node

[root@master-1-230 opt]# mkdir -p /opt/etcd_backup/

[root@master-1-230 opt]# ETCDCTL_API=3 etcdctl \

> snapshot save /opt/etcd_backup/snap-etcd-$(date +%F-%H-%M-%S).db \

> --endpoints=https://192.168.1.230:2379 \

> --cacert=/etc/kubernetes/pki/etcd/ca.crt \

> --cert=/etc/kubernetes/pki/etcd/server.crt \

> --key=/etc/kubernetes/pki/etcd/server.key

{"level":"info","ts":"2023-11-05T11:47:50.405+0800","caller":"snapshot/v3_snapshot.go:65","msg":"created temporary db file","path":"/opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db.part"}

{"level":"info","ts":"2023-11-05T11:47:50.412+0800","logger":"client","caller":"v3@v3.5.7/maintenance.go:212","msg":"opened snapshot stream; downloading"}

{"level":"info","ts":"2023-11-05T11:47:50.412+0800","caller":"snapshot/v3_snapshot.go:73","msg":"fetching snapshot","endpoint":"https://192.168.1.230:2379"}

{"level":"info","ts":"2023-11-05T11:47:50.460+0800","logger":"client","caller":"v3@v3.5.7/maintenance.go:220","msg":"completed snapshot read; closing"}

{"level":"info","ts":"2023-11-05T11:47:50.552+0800","caller":"snapshot/v3_snapshot.go:88","msg":"fetched snapshot","endpoint":"https://192.168.1.230:2379","size":"5.0 MB","took":"now"}

{"level":"info","ts":"2023-11-05T11:47:50.552+0800","caller":"snapshot/v3_snapshot.go:97","msg":"saved","path":"/opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db"}

Snapshot saved at /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db

1.3 Restore (single-node etcd)
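Before restoring from a snapshot, it can be worth confirming that the file is present and readable. The sketch below is one way to do that. `check_snapshot` is a hypothetical helper name, and it assumes `etcdutl` (shipped in the same release tarball as etcdctl) is on the PATH; if it is not, the helper only checks that the file exists and is non-empty.

```shell
#!/usr/bin/env bash
# Sketch: sanity-check an etcd snapshot file before restoring from it.
# check_snapshot is a hypothetical helper, not part of etcd.
check_snapshot() {
  local snap="$1"
  if [ ! -f "$snap" ]; then
    echo "snapshot not found: $snap" >&2
    return 1
  fi
  if [ ! -s "$snap" ]; then
    echo "snapshot is empty: $snap" >&2
    return 1
  fi
  # Assumption: etcdutl is on the PATH. In this article it lives under
  # /opt/etcd-v3.5.7-linux-amd64/, so extend PATH or symlink it first.
  if command -v etcdutl >/dev/null 2>&1; then
    etcdutl snapshot status "$snap" --write-out=table
  else
    echo "etcdutl not in PATH; only checked that the file exists and is non-empty" >&2
  fi
}
```

Usage would look like `check_snapshot /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db`; a non-zero exit status means the file should not be used for a restore.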

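Stop the kube-apiserver and etcd Pods:

mv /etc/kubernetes/manifests/ /etc/kubernetes/manifests_bak   ## renaming the directory stops the static Pods automatically

mv /var/lib/etcd/ /var/lib/etcd_bak

Restore the snapshot:

ETCDCTL_API=3 /opt/etcd-v3.5.7-linux-amd64/etcdutl snapshot restore /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db --data-dir=/var/lib/etcd   ## the /var/lib/etcd/ directory is created automatically

Start the kube-apiserver and etcd Pods:

mv /etc/kubernetes/manifests_bak /etc/kubernetes/manifests   ## move the directory back and the services start on their own

Check the Pods; anything deleted after the snapshot was taken should be back:

kubectl get po

1.4 Restore (etcd cluster)

Copy the etcd snapshot taken on master-1-230 and the etcd executables to the other 2 nodes: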

[root@master-1-230 etcd_backup]# scp /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db master-1-234:/tmp/

snap-etcd-2023-11-05-11-47-50.db 100% 4896KB 52.1MB/s 00:00

[root@master-1-230 etcd_backup]# scp /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db master-1-235:/tmp/

snap-etcd-2023-11-05-11-47-50.db

# scp -r /opt/etcd-v3.5.7-linux-amd64/ master-1-234:/opt/

The authenticity of host 'master-1-234 (192.168.1.234)' can't be established.

ECDSA key fingerprint is SHA256:JF34KxGa2jCFAjdEQnmCxD6Ff5Ywbl9ieLAOQUdhwAE.

ECDSA key fingerprint is MD5:5e:09:1e:13:72:5c:71:82:c8:9e:93:c9:e2:63:67:7a.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'master-1-234' (ECDSA) to the list of known hosts.

README.md 100% 9394 5.8MB/s 00:00

READMEv2-etcdctl.md 100% 7896 4.6MB/s 00:00

etcdutl 100% 13MB 73.7MB/s 00:00

etcdctl 100% 16MB 63.2MB/s 00:00

README.md 100% 532 533.7KB/s 00:00

v3election.swagger.json 100% 12KB 5.3MB/s 00:00

rpc.swagger.json 100% 91KB 39.8MB/s 00:00

v3lock.swagger.json 100% 5670 4.1MB/s 00:00

README-etcdutl.md 100% 7359 7.7MB/s 00:00

README-etcdctl.md 100% 41KB 26.1MB/s 00:00

etcd 100% 22MB 36.8MB/s 00:00

[root@master-1-230 etcd_backup]# scp -r /opt/etcd-v3.5.7-linux-amd64/ master-1-235:/opt/

The authenticity of host 'master-1-235 (192.168.1.235)' can't be established.

ECDSA key fingerprint is SHA256:rNDJIissGX1rU+raciQMvD2I2CzjY3w2KvErxzKDfCA.

ECDSA key fingerprint is MD5:51:42:52:c1:64:fa:4f:80:fe:d9:00:74:dc:10:be:ed.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'master-1-235' (ECDSA) to the list of known hosts.

README.md 100% 9394 5.7MB/s 00:00

READMEv2-etcdctl.md 100% 7896 5.0MB/s 00:00

etcdutl 100% 13MB 74.7MB/s 00:00

etcdctl 100% 16MB 69.4MB/s 00:00

README.md 100% 532 447.7KB/s 00:00

v3election.swagger.json 100% 12KB 8.6MB/s 00:00

rpc.swagger.json 100% 91KB 36.1MB/s 00:00

v3lock.swagger.json 100% 5670 3.4MB/s 00:00

README-etcdutl.md 100% 7359 6.4MB/s 00:00

README-etcdctl.md 100% 41KB 21.6MB/s 00:00

etcd 100% 22MB 63.3MB/s 00:00

Stop the kube-apiserver and etcd Pods on every node that runs etcd:

mv /etc/kubernetes/manifests/ /etc/kubernetes/manifests_bak   ## renaming the directory stops the static Pods automatically

mv /var/lib/etcd/ /var/lib/etcd_bak

Restore the data on each of the 3 etcd nodes.
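The per-node restore commands that follow differ only in the member name, the advertised peer URL, and the snapshot path. As a rough sketch, they can be generated rather than typed by hand. `restore_cmd` is a hypothetical helper that only prints the command and does not run it; the node names, IPs, and paths are the ones used in this section.

```shell
#!/usr/bin/env bash
# Sketch: print the etcdutl restore command for one cluster member.
# restore_cmd is a hypothetical helper; it prints the command, it does not execute it.
INITIAL_CLUSTER="master-1-230=https://192.168.1.230:2380,master-1-235=https://192.168.1.235:2380,master-1-234=https://192.168.1.234:2380"

restore_cmd() {
  local name="$1" ip="$2" snap="$3"
  printf '%s snapshot restore %s --data-dir=/var/lib/etcd --name %s --initial-cluster="%s" --initial-advertise-peer-urls="https://%s:2380"\n' \
    /opt/etcd-v3.5.7-linux-amd64/etcdutl "$snap" "$name" "$INITIAL_CLUSTER" "$ip"
}

# One line per member; review the output, then run each line on its own node.
restore_cmd master-1-230 192.168.1.230 /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db
restore_cmd master-1-234 192.168.1.234 /tmp/snap-etcd-2023-11-05-11-47-50.db
restore_cmd master-1-235 192.168.1.235 /tmp/snap-etcd-2023-11-05-11-47-50.db
```

Keeping the `--initial-cluster` string in a single variable avoids the most likely mistake here, which is a member list that differs between nodes.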

Restore the etcd data on master-1-230:

ETCDCTL_API=3 /opt/etcd-v3.5.7-linux-amd64/etcdutl snapshot restore /opt/etcd_backup/snap-etcd-2023-11-05-11-47-50.db --data-dir=/var/lib/etcd --name master-1-230 --initial-cluster="master-1-230=https://192.168.1.230:2380,master-1-235=https://192.168.1.235:2380,master-1-234=https://192.168.1.234:2380" --initial-advertise-peer-urls="https://192.168.1.230:2380"

Restore the etcd data on master-1-234:

ETCDCTL_API=3 /opt/etcd-v3.5.7-linux-amd64/etcdutl snapshot restore /tmp/snap-etcd-2023-11-05-11-47-50.db --data-dir=/var/lib/etcd --name master-1-234 --initial-cluster="master-1-230=https://192.168.1.230:2380,master-1-235=https://192.168.1.235:2380,master-1-234=https://192.168.1.234:2380" --initial-advertise-peer-urls="https://192.168.1.234:2380"

Restore the etcd data on master-1-235:

ETCDCTL_API=3 /opt/etcd-v3.5.7-linux-amd64/etcdutl snapshot restore /tmp/snap-etcd-2023-11-05-11-47-50.db --data-dir=/var/lib/etcd --name master-1-235 --initial-cluster="master-1-230=https://192.168.1.230:2380,master-1-235=https://192.168.1.235:2380,master-1-234=https://192.168.1.234:2380" --initial-advertise-peer-urls="https://192.168.1.235:2380"

Start the kube-apiserver and etcd Pods on every node that runs etcd:

mv /etc/kubernetes/manifests_bak /etc/kubernetes/manifests   ## move the directory back and the services start on their own

2. Kubernetes Cluster Optimization

1) The official limits are:

- No more than 5000 nodes
- No more than 150000 pods in total
- No more than 300000 containers in total
- No more than 110 pods per node

2) Master node sizing

| Worker nodes | CPU | Memory |
| --- | --- | --- |
| 1-5 | 1 core | 3.75G |
| 6-10 | 2 cores | 7.5G |
| 11-100 | 4 cores | 15G |
| 101-250 | 8 cores | 30G |
| 251-500 | 16 cores | 60G |
| More than 500 | 32 cores | 120G |
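The sizing table above can be turned into a small lookup, which is handy in provisioning scripts. This is only a sketch: `master_size` is a hypothetical helper, and it assumes its argument is a positive integer count of worker nodes.

```shell
#!/usr/bin/env bash
# Sketch: map a worker-node count to the master sizing from the table above.
# master_size is a hypothetical helper; input is assumed to be a positive integer.
master_size() {
  local nodes="$1"
  if   [ "$nodes" -le 5 ];   then echo "1 core, 3.75G"
  elif [ "$nodes" -le 10 ];  then echo "2 cores, 7.5G"
  elif [ "$nodes" -le 100 ]; then echo "4 cores, 15G"
  elif [ "$nodes" -le 250 ]; then echo "8 cores, 30G"
  elif [ "$nodes" -le 500 ]; then echo "16 cores, 60G"
  else                            echo "32 cores, 120G"
  fi
}

master_size 120
```

On a live cluster the argument could come from something like `kubectl get nodes --no-headers | wc -l`, keeping in mind that this count also includes the master nodes themselves.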

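3) kube-apiserver optimization

High availability

Run multiple kube-apiserver instances behind an external load balancer. The load balancer itself also has to be highly available, so we use Keepalived + HAProxy.

Limiting concurrent requests

In Kubernetes, "mutating requests" are requests that change resources, such as creating, updating, or deleting them. These requests modify the state or configuration of the cluster.

"Non-mutating requests", by contrast, do not change resources. They are usually read or watch requests, such as fetching a resource or listing resources.

kube-apiserver distinguishes the two types so that it can apply different handling and different concurrency limits. Mutating requests affect cluster state more directly, so they are usually handled with tighter limits.

- --max-mutating-requests-inflight: limits the number of mutating requests in flight at any given time. This helps protect the apiserver from overload and reduces resource contention and failures. 0 means no limit; the default is 200.
- --max-requests-inflight: limits the number of non-mutating requests in flight at any given time. 0 means no limit; the default is 400.
- With 1000 to 3000 nodes, the recommended values are:

--max-requests-inflight=1500 --max-mutating-requests-inflight=500

- With more than 3000 nodes, the recommended values are:

--max-requests-inflight=3000 --max-mutating-requests-inflight=1000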
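The recommendations above amount to a simple threshold rule. A minimal sketch follows; `inflight_flags` is a hypothetical helper, and since the text gives no recommendation below 1000 nodes, the helper falls back to the default values quoted above for that case.

```shell
#!/usr/bin/env bash
# Sketch: pick kube-apiserver inflight limits from the node count.
# inflight_flags is a hypothetical helper; thresholds come from the recommendations above.
inflight_flags() {
  local nodes="$1"
  if [ "$nodes" -gt 3000 ]; then
    echo "--max-requests-inflight=3000 --max-mutating-requests-inflight=1000"
  elif [ "$nodes" -ge 1000 ]; then
    echo "--max-requests-inflight=1500 --max-mutating-requests-inflight=500"
  else
    # No recommendation is given below 1000 nodes; use the defaults quoted in the text.
    echo "--max-requests-inflight=400 --max-mutating-requests-inflight=200"
  fi
}

inflight_flags 2000
```

The printed flags would then be added to the kube-apiserver command line in its static Pod manifest.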

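4) kube-scheduler and kube-controller-manager optimization

High availability

kube-controller-manager and kube-scheduler achieve high availability through leader election. Enabling it takes the following parameters:

--leader-elect=true
--leader-elect-lease-duration=15s
--leader-elect-renew-deadline=10s
--leader-elect-resource-lock=endpoints
--leader-elect-retry-period=2s

The endpoints lock type comes from older releases. Recent Kubernetes versions default to leases, so check which lock types your version accepts before setting --leader-elect-resource-lock.

If the cluster was deployed with the high-availability method described earlier, --leader-elect=true is already set.

These parameters are set in /etc/kubernetes/manifests/kube-controller-manager.yaml and /etc/kubernetes/manifests/kube-scheduler.yaml.

Controlling QPS (controller-manager)

--kube-api-qps limits the sustained number of requests per second that kube-controller-manager sends to kube-apiserver. Recommended:

--kube-api-qps=100

Controlling burst (controller-manager)

--kube-api-burst is a kube-controller-manager parameter, not a kube-apiserver one. It controls how many requests the controller manager may send to kube-apiserver in a short burst.

It sets the upper bound on requests that can be sent in a brief spike, which absorbs sudden traffic. The burst is the short-term allowance above the per-second rate defined by --kube-api-qps.

For example, --kube-api-burst=200 lets the controller manager send up to 200 requests in a burst before it is throttled back to the --kube-api-qps rate. Recommended:

--kube-api-burst=200

Both parameters are set in /etc/kubernetes/manifests/kube-controller-manager.yaml.

5) Kubelet optimization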

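- Set --image-pull-progress-deadline=30m: if an image pull makes no progress within this deadline, kubelet cancels the pull and reports a failure. This flag is specific to the Docker runtime, so it only applies where kubelet still talks to Docker directly.
- Set --serialize-image-pulls=false: this parameter controls whether images are pulled concurrently. When it is true, kubelet pulls images serially, one after another. Setting it to false allows images to be pulled in parallel.
- Set --max-pods=110: the maximum number of Pods kubelet allows on a single node. The default is 110; adjust it as needed.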
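On recent releases, kubelet settings such as these are usually placed in the kubelet configuration file rather than passed as flags. As a sketch, the helper below prints the equivalent `KubeletConfiguration` fields for the last two items. `kubelet_config_fragment` is a hypothetical name, and the assumption that the file lives at /var/lib/kubelet/config.yaml applies to kubeadm-based clusters; merge the fields by hand rather than overwriting the file.

```shell
#!/usr/bin/env bash
# Sketch: print the config-file equivalents of --serialize-image-pulls and --max-pods.
# kubelet_config_fragment is a hypothetical helper; it only prints YAML to stdout.
kubelet_config_fragment() {
  cat <<'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
serializeImagePulls: false
maxPods: 110
EOF
}

kubelet_config_fragment
```

After merging these fields into the node's kubelet configuration, kubelet has to be restarted for them to take effect.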

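6) Etcd optimization

Ideally etcd is split out and deployed as its own cluster (kubeadm can deploy it), and the Kubernetes cluster is then pointed at this external etcd cluster when it is initialized.

Use SSDs for etcd's disks to improve disk I/O performance. Because etcd must persist data to on-disk log files, disk activity from other processes can lengthen write times, which leads to etcd request timeouts and temporary leader loss. Giving the etcd process a high disk priority lets it run stably alongside those processes:

ionice -c2 -n0 -p $(pgrep etcd)

Raising the storage quota

The default etcd space quota is 2G. Once it is exceeded, etcd stops accepting writes. Increase the quota with the --quota-backend-bytes parameter; up to 8G is supported.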
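--quota-backend-bytes takes a value in bytes, so the size has to be converted first. A small sketch, assuming the "8G" above is meant as 8 GiB; `gib_to_bytes` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: convert a size in GiB to bytes for --quota-backend-bytes.
# gib_to_bytes is a hypothetical helper; it assumes 64-bit shell arithmetic.
gib_to_bytes() {
  echo $(( $1 * 1024 * 1024 * 1024 ))
}

echo "--quota-backend-bytes=$(gib_to_bytes 8)"
# prints: --quota-backend-bytes=8589934592
```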

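Edit /etc/kubernetes/manifests/etcd.yaml and add the parameter.

Separating events storage

Events can be stored in a dedicated etcd cluster with the following kube-apiserver flags. Overrides for different resources are separated by commas, while the servers inside one override are separated by semicolons:

--etcd-servers="http://etcd1:2379,http://etcd2:2379,http://etcd3:2379" --etcd-servers-overrides="/events#http://etcd4:2379;http://etcd5:2379;http://etcd6:2379"

3. Introduction to Full-Link Monitoring with SkyWalking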

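3.1 APM vs. traditional monitoring

- How do you tie the whole call chain together and locate problems quickly? For example, the data flow between an application and third-party services, or the calls between applications.

- How do you untangle the dependencies between microservices? For example, application A calls application B, which in turn calls application C.

- How do you analyze the performance of each microservice interface?

- How do you trace the order in which an entire business flow is processed?

An APM tool such as SkyWalking can display these call relationships quickly and automatically.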

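3.2 About SkyWalking

SkyWalking is an open-source framework that originated in China. It is an application performance monitoring tool for distributed systems, designed for microservices, cloud-native, and container-based (Docker, k8s) architectures.

Github: https://github.com/apache/skywalking

Official documentation: https://skywalking.apache.org/docs/

- Service: a group of workloads that provide the same behavior for incoming requests. You can define the service name when using an instrumentation agent or SDK. SkyWalking can also use names defined in platforms such as Istio.

- Service Instance: each workload in that group is called an instance. Like pods in Kubernetes, a service instance is not necessarily a single operating system process. When you use an instrumentation agent, however, a service instance is in fact one real operating system process.

- Endpoint: a request path received by a particular service, such as an HTTP URI path or a gRPC service class name plus method signature.

With SkyWalking, users can see the topology between services and endpoints, view performance metrics for each service, service instance, and endpoint, and set alerting rules.

- Probes: they differ depending on the source, but they all serve to collect data and convert it into a format SkyWalking can use.

- Platform backend: supports data aggregation and analysis, and drives the data flow from the probes to the UI. Analysis covers SkyWalking's native traces and metrics as well as third-party sources.

- Storage: stores SkyWalking data through an open, pluggable interface. You can choose a storage system such as Elasticsearch or a MySQL cluster.

- UI: a highly customizable web interface in which users can visualize and manage SkyWalking data.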

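3) Probes

A probe is an agent or SDK library integrated into the target system. It collects telemetry data, including traces and performance metrics. Depending on the target system's technology stack, probes may achieve this in very different ways, but fundamentally they all do the same thing: collect and format data, then send it to the backend.

SkyWalking probes fall into three groups:

- Language-based native agents: this type of agent runs in the user space of the target service, like part of the user's code. The SkyWalking Java agent, for example, uses the -javaagent command-line argument to manipulate code at runtime. Another approach is to use hook functions or interception mechanisms provided by the target libraries. These probes are tied to specific languages and libraries.

- Service mesh probes: these collect data from the sidecars and control plane of a service mesh. In the past, proxies only acted as the ingress for the whole cluster; with service meshes and sidecars, observation can now be built on them.

- Third-party instrumentation libraries: SkyWalking can accept the data formats produced by other popular instrumentation libraries. It analyzes that data and converts it into its own trace and metric formats.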

4. SkyWalking Deployment

4.1 Versions

Skywalking 9.5.0

Elasticsearch 8.10.4

4.2 Installing Elasticsearch

4.2.1 Check whether the bitnami repository is configured

# helm repo list|grep bitnami

bitnami https://charts.bitnami.com/bitnami

If the repository is not listed, add it:

helm repo add bitnami https://charts.bitnami.com/bitnami

helm repo update

4.2.2 Download the Elasticsearch chart

# helm search repo elasticsearch|grep bitnami

bitnami/elasticsearch 19.13.5 8.10.4 Elasticsearch is a distributed search and analy...

bitnami/dataplatform-bp2 12.0.5 1.0.1 DEPRECATED This Helm chart can be used for the ...

bitnami/kibana 10.5.10 8.10.4 Kibana is an open source, browser based analyti...

# helm pull bitnami/elasticsearch --untar --version 19.13.5

4.2.3 Install Elasticsearch

cd elasticsearch

vi values.yaml

Set storageClass: "nfs-client"

Find memory: 2048Mi and change it to memory: 1024Mi

Find heapSize: 1024m and change it to heapSize: 512m

Find replicaCount: 2 and change it to replicaCount: 1   ## do not change this in production

# helm install skywalking-es .

NAME: skywalking-es

LAST DEPLOYED: Sun Nov 5 19:55:37 2023

NAMESPACE: default

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

CHART NAME: elasticsearch

CHART VERSION: 19.13.5

APP VERSION: 8.10.4

-------------------------------------------------------------------------------

WARNING

Elasticsearch requires some changes in the kernel of the host machine to

work as expected. If those values are not set in the underlying operating

system, the ES containers fail to boot with ERROR messages.

More information about these requirements can be found in the links below:

https://www.elastic.co/guide/en/elasticsearch/reference/current/file-descriptors.html

https://www.elastic.co/guide/en/elasticsearch/reference/current/vm-max-map-count.html

This chart uses a privileged initContainer to change those settings in the Kernel

by running: sysctl -w vm.max_map_count=262144 && sysctl -w fs.file-max=65536

** Please be patient while the chart is being deployed **

Elasticsearch can be accessed within the cluster on port 9200 at skywalking-es-elasticsearch.default.svc.cluster.local

To access from outside the cluster execute the following commands:

kubectl port-forward --namespace default svc/skywalking-es-elasticsearch 9200:9200 &

curl http://127.0.0.1:9200/

# kubectl get po |grep es-

kubectl get svc|grep es-

curl http://10.109.107.49:9200

4.3 Installing SkyWalking

4.3.1 Add the chart repository

# helm repo add skywalking https://apache.jfrog.io/artifactory/skywalking-helm

"skywalking" has been added to your repositories

[root@master-1-230 elasticsearch]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "myharbor" chart repository

...Successfully got an update from the "aliyun" chart repository

...Successfully got an update from the "skywalking" chart repository

...Successfully got an update from the "bitnami" chart repository

...Successfully got an update from the "prometheus-community" chart repository

Update Complete. ⎈Happy Helming!⎈

4.3.2 Download the chart

# helm search repo skywalking

NAME CHART VERSION APP VERSION DESCRIPTION

skywalking/skywalking 4.3.0 Apache SkyWalking APM System

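helm pull skywalking/skywalking --version 4.3.0

4.3.3 Edit values.yaml

tar zxvf skywalking-4.3.0.tgz

cd skywalking

vi values.yaml   # several places need to be changed

elasticsearch:
  config:
    host: skywalking-es-elasticsearch.default   ## the Service name (see kubectl get svc) plus .default for the default namespace
    port:
      http: 9200
  enabled: false   ## change true to false so the chart does not install Elasticsearch, since it was installed manually above

oap:
  image:
    tag: 9.5.0
  javaOpts: -Xmx512m -Xms512m   ## reduced memory; raise it as appropriate in production
  replicas: 1   ## changed from 2 to 1 to save resources; do not use 1 in production
  storageType: elasticsearch   ## use Elasticsearch as the storage backend

ui:
  image:
    tag: 9.5.0

4.3.4 Install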

# helm install skywalking .

NAME: skywalking

LAST DEPLOYED: Sun Nov 5 20:12:52 2023

NAMESPACE: default

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

************************************************************************

* *

* SkyWalking Helm Chart by SkyWalking Team *

* *

************************************************************************

Thank you for installing skywalking.

Your release is named skywalking.

Learn more, please visit https://skywalking.apache.org/

Get the UI URL by running these commands:

echo "Visit http://127.0.0.1:8080 to use your application"

kubectl port-forward svc/skywalking-ui 8080:80 --namespace default

4.3.5 Port forwarding: forward port 80 of the skywalking-ui service to 8083

nohup kubectl port-forward svc/skywalking-ui --address 192.168.1.230 8083:80 --namespace default &

# netstat -lnp|grep 8083

tcp 0 0 192.168.1.230:8083 0.0.0.0:* LISTEN 59557/kubectl

Also forward the OAP gRPC port 11800, which the Java agent reports to later:

nohup kubectl port-forward svc/skywalking-oap --address 192.168.1.230 11800:11800 &

4.3.6 Access the UI

http://192.168.1.230:8083/

4.3.7 View the data in Elasticsearch

kubectl get svc|grep es

curl http://10.109.107.49:9200/_cat/indices?v

5. Configuring and Using SkyWalking

5.1 Configuring a Java application

5.1.1 Install Docker on the master-1-230 node

Install Docker:

curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

[root@master-1-230 13.3]# systemctl restart docker

[root@master-1-230 13.3]# systemctl enable docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

[root@master-1-230 13.3]# docker version

Client: Docker Engine - Community

Version: 24.0.7

API version: 1.43

Go version: go1.20.10

Git commit: afdd53b

Built: Thu Oct 26 09:11:35 2023

OS/Arch: linux/amd64

Context: default

Server: Docker Engine - Community

Engine:

Version: 24.0.7

API version: 1.43 (minimum version 1.12)

Go version: go1.20.10

Git commit: 311b9ff

Built: Thu Oct 26 09:10:36 2023

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.6.24

GitCommit: 61f9fd88f79f081d64d6fa3bb1a0dc71ec870523

runc:

Version: 1.1.9

GitCommit: v1.1.9-0-gccaecfc

docker-init:

Version: 0.19.0

GitCommit: de40ad0

5.1.2 Install git

yum install -y git

5.1.3 Clone the zrlog source

git clone https://gitee.com/94fzb/zrlog-docker.git

Cloning into 'zrlog-docker'...

remote: Enumerating objects: 82, done.

remote: Counting objects: 100% (3/3), done.

remote: Compressing objects: 100% (3/3), done.

remote: Total 82 (delta 0), reused 0 (delta 0), pack-reused 79

Unpacking objects: 100% (82/82), done.

5.1.4 Build

cd zrlog-docker

## Download the SkyWalking Java agent

which wget &> /dev/null || yum install -y wget

wget https://archive.apache.org/dist/skywalking/java-agent/8.15.0/apache-skywalking-java-agent-8.15.0.tgz

tar zxvf apache-skywalking-java-agent-8.15.0.tgz

## Zip the directory that was just extracted

which zip &>/dev/null || yum install -y zip

zip -r java-agent.zip skywalking-agent

## Edit the startup script run.sh inside the zrlog package to add the SkyWalking Java agent

which unzip &>/dev/null || yum install -y unzip

wget http://dl.zrlog.com/release/zrlog.zip

mkdir zrlog

unzip zrlog.zip -d zrlog/

vi zrlog/bin/run.sh   ## change the content to the following

java -javaagent:/opt/tomcat/skywalking-agent/skywalking-agent.jar -Dskywalking.agent.service_name=app1 -Dskywalking.collector.backend_service=192.168.1.230:11800 -Xmx128m -Dfile.encoding=UTF-8 -jar zrlog-starter.jar

# Notes:

# javaagent: path to the skywalking-agent.jar file

# skywalking.agent.service_name: the name of this application in SkyWalking

# skywalking.collector.backend_service: address of the SkyWalking server, i.e. the gRPC reporting address; the default port is 11800
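The service name and backend address are hard-coded in the run.sh line above. As a sketch of one way to make them configurable, the helper below assembles the same command from two arguments. `agent_java_cmd`, `SW_SERVICE`, and `SW_BACKEND` are hypothetical names introduced here for illustration; the defaults are the values used in this article.

```shell
#!/usr/bin/env bash
# Sketch: build the zrlog start command with the SkyWalking agent options as parameters.
# agent_java_cmd, SW_SERVICE and SW_BACKEND are hypothetical names, not part of SkyWalking or zrlog.
agent_java_cmd() {
  local service="$1" backend="$2"
  echo "java -javaagent:/opt/tomcat/skywalking-agent/skywalking-agent.jar" \
       "-Dskywalking.agent.service_name=${service}" \
       "-Dskywalking.collector.backend_service=${backend}" \
       "-Xmx128m -Dfile.encoding=UTF-8 -jar zrlog-starter.jar"
}

# Print the command; with no overrides this matches the run.sh line above.
agent_java_cmd "${SW_SERVICE:-app1}" "${SW_BACKEND:-192.168.1.230:11800}"
```

Printing the command instead of executing it keeps the sketch safe to try; in a real run.sh the printed line would be executed, for example via `exec`.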

## Repackage zrlog

cd zrlog

zip -r zrlog.zip ./*

cd ..

/bin/mv zrlog/zrlog.zip ./

## Edit the Dockerfile

vi Dockerfile   # change it to the following

FROM openjdk:17
MAINTAINER "xiaochun" xchun90@163.com
CMD ["/bin/bash"]
ARG DUMMY
RUN mkdir -p /opt/tomcat
#RUN curl -o /opt/tomcat/ROOT.zip http://dl.zrlog.com/release/zrlog.zip?${DUMMY}
COPY zrlog.zip /opt/tomcat/ROOT.zip
RUN cd /opt/tomcat && jar -xf ROOT.zip
ADD /java-agent.zip /opt/tomcat/java-agent.zip
RUN cd /opt/tomcat && jar -xf java-agent.zip
ADD /bin/run.sh /run.sh
RUN chmod a+x /run.sh
#RUN rm /opt/tomcat/ROOT.zip
CMD /run.sh

## End of the Dockerfile

## Build the image

sh build.sh

5.1.5 安装zrlog应用

使用docker 启动容器

# docker run -itd -p 28080:8080 -e DOCKER_MODE='true' zrlog /run.sh

eee130a11c58faccdae4ba4bba49f249e0b41069ca56d14c92be2998dce4dcd1访问,首先查看zrlog对外映射端口

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

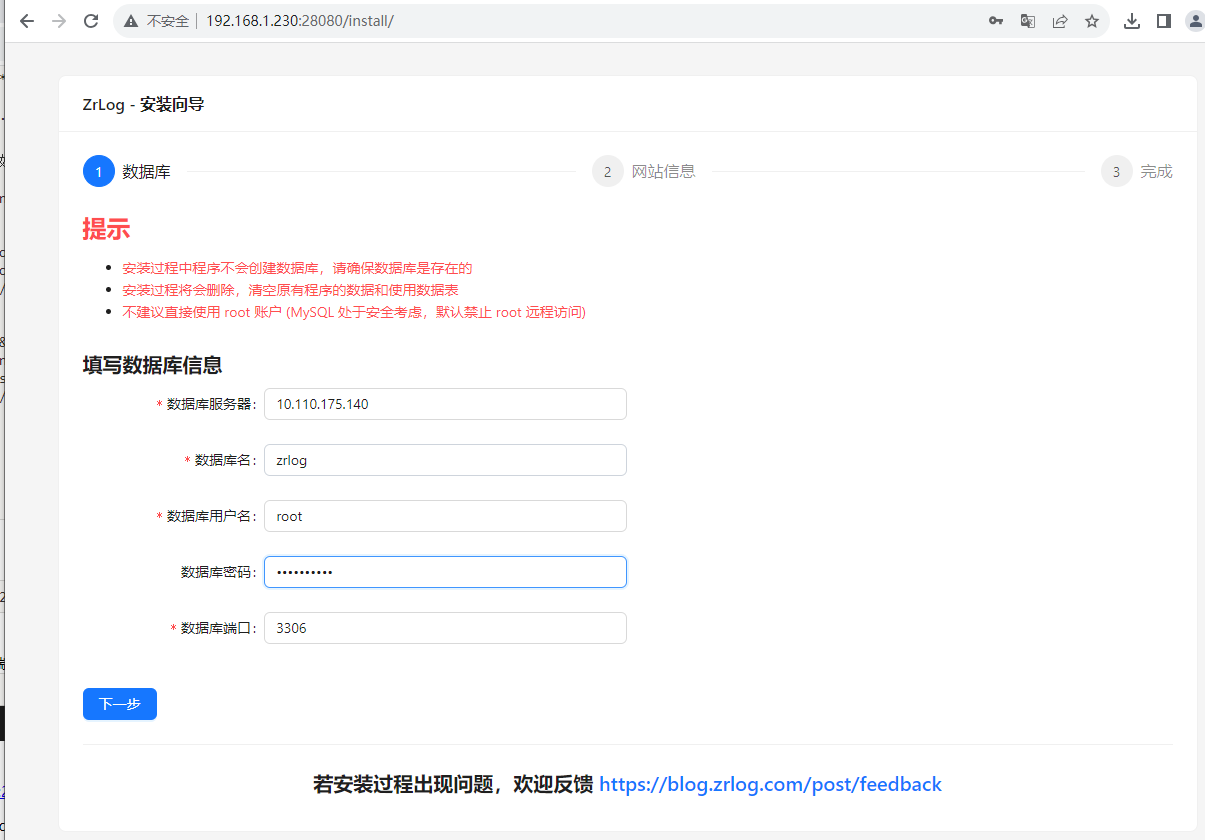

eee130a11c58 zrlog "/run.sh" 3 seconds ago Up 2 seconds 0.0.0.0:28080->8080/tcp happy_brown访问zrlog:http://192.168.1.230:28080/install/

5.1.6 Install MySQL with helm

# helm search repo mysql

NAME CHART VERSION APP VERSION DESCRIPTION

aliyun/mysql 0.3.5 Fast, reliable, scalable, and easy to use open-...

bitnami/mysql 9.14.1 8.0.35 MySQL is a fast, reliable, scalable, and easy t...

prometheus-community/prometheus-mysql-exporter 2.1.0 v0.15.0 A Helm chart for prometheus mysql exporter with...

aliyun/percona 0.3.0 free, fully compatible, enhanced, open source d...

aliyun/percona-xtradb-cluster 0.0.2 5.7.19 free, fully compatible, enhanced, open source d...

bitnami/phpmyadmin 13.0.0 5.2.1 phpMyAdmin is a free software tool written in P...

aliyun/gcloud-sqlproxy 0.2.3 Google Cloud SQL Proxy

aliyun/mariadb 2.1.6 10.1.31 Fast, reliable, scalable, and easy to use open-...

bitnami/mariadb 14.1.0 11.1.2 MariaDB is an open source, community-developed ...

bitnami/mariadb-galera 10.0.3 11.1.2 MariaDB Galera is a multi-primary database clus...

[root@master-1-230 13.3]# helm pull --untar bitnami/mysql

[root@master-1-230 13.3]# cd mysql/

[root@master-1-230 13.3]#vi values.yaml

Set storageClass: "nfs-client"

[root@master-1-230 mysql]# helm install zrlog-mysql .

NAME: zrlog-mysql

LAST DEPLOYED: Sun Nov 5 20:57:03 2023

NAMESPACE: default

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

CHART NAME: mysql

CHART VERSION: 9.14.1

APP VERSION: 8.0.35

** Please be patient while the chart is being deployed **

Tip:

Watch the deployment status using the command: kubectl get pods -w --namespace default

Services:

echo Primary: zrlog-mysql.default.svc.cluster.local:3306

Execute the following to get the administrator credentials:

echo Username: root

MYSQL_ROOT_PASSWORD=$(kubectl get secret --namespace default zrlog-mysql -o jsonpath="{.data.mysql-root-password}" | base64 -d)

To connect to your database:

1. Run a pod that you can use as a client:

kubectl run zrlog-mysql-client --rm --tty -i --restart='Never' --image docker.io/bitnami/mysql:8.0.35-debian-11-r0 --namespace default --env MYSQL_ROOT_PASSWORD=$MYSQL_ROOT_PASSWORD --command -- bash

2. To connect to primary service (read/write):

mysql -h zrlog-mysql.default.svc.cluster.local -uroot -p"$MYSQL_ROOT_PASSWORD"

[root@master-1-230 mysql]# kubectl get pod -o wide|grep mysql

zrlog-mysql-0 0/1 Running 0 107s 10.244.154.8 node-1-233 <none> <none>

[root@master-1-230 mysql]# kubectl get svc |grep mysql

zrlog-mysql ClusterIP 10.110.175.140 <none> 3306/TCP 5m36s

zrlog-mysql-headless ClusterIP None <none> 3306/TCP 5m36s

[root@master-1-230 mysql]# MYSQL_ROOT_PASSWORD=$(kubectl get secret --namespace default zrlog-mysql -o jsonpath="{.data.mysql-root-password}" | base64 -d)

[root@master-1-230 mysql]# echo $MYSQL_ROOT_PASSWORD

64T2LBgJZg

[root@master-1-230 mysql]# kubectl run zrlog-mysql-client --rm --tty -i --restart='Never' --image docker.io/bitnami/mysql:8.0.35-debian-11-r0 --namespace default --env MYSQL_ROOT_PASSWORD=$MYSQL_ROOT_PASSWORD --command -- bash

If you don't see a command prompt, try pressing enter.

I have no name!@zrlog-mysql-client:/$ mysql -h zrlog-mysql.default.svc.cluster.local -uroot -p"$MYSQL_ROOT_PASSWORD"

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 27

Server version: 8.0.35 Source distribution

Copyright (c) 2000, 2023, Oracle and/or its affiliates.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> create database zrlog;

Query OK, 1 row affected (0.01 sec)

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| my_database |

| mysql |

| performance_schema |

| sys |

| zrlog |

+--------------------+

6 rows in set (0.03 sec)

mysql>

5.1.7 Access the SkyWalking UI

http://192.168.1.230:8083/general