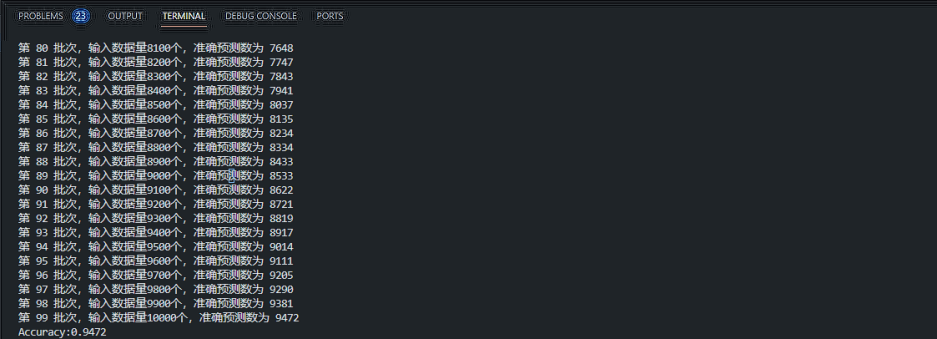

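Neural Network Model Workflow

This post organizes my own thinking about the workflow for building a neural network model. It does not break the process down into fine detail; it covers only the main flow.

I split the whole flow into two main stages: training and inference. The training stage (run ahead of time, before any inference) builds the network structure and produces a parameter file holding the learned weights and biases. The inference stage loads that network and those parameters and applies them.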

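Implementing a Convolutional Neural Network

In the earlier post 聊聊卷积神经网络CNN I gave an overview of the theory behind convolutional neural networks; now it is time for some rough practice. The code here does not rely on a large framework such as PyTorch or TensorFlow; the algorithms are implemented directly on top of NumPy. The operators and functions in PyTorch/TensorFlow are ones someone else has already implemented for us to call, whereas with NumPy we have to implement them ourselves. The result is not very rigorous, but it is good enough for learning. The source code comes from 《深度学习入门:基于Python的理论与实现》 and can be downloaded from https://www.ituring.com.cn/book/1921.

Building the CNN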

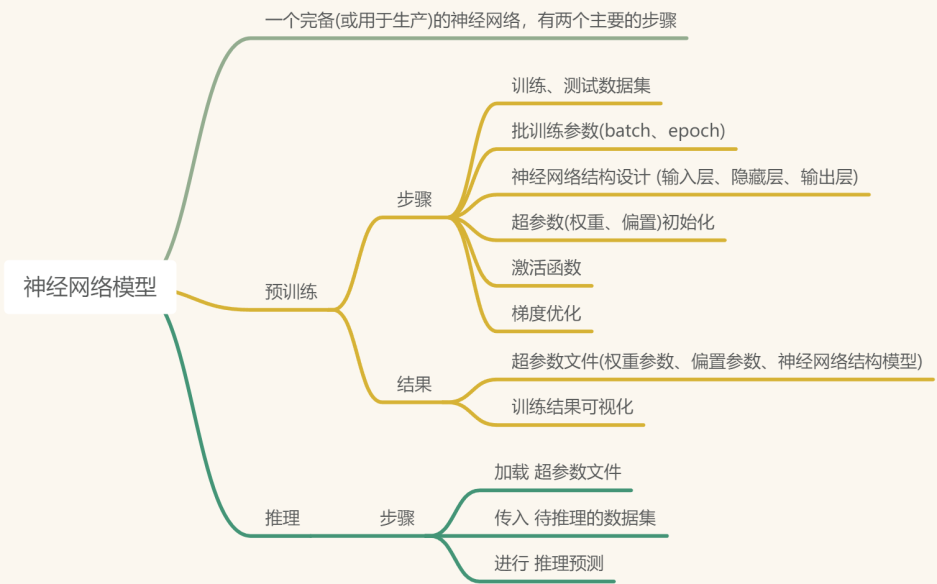

The network is structured as follows:

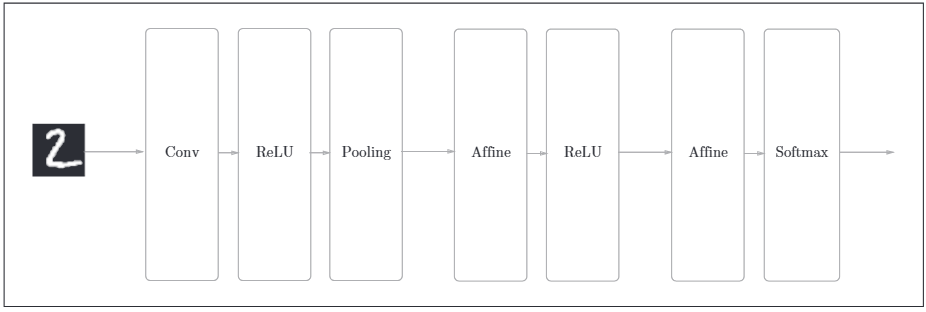

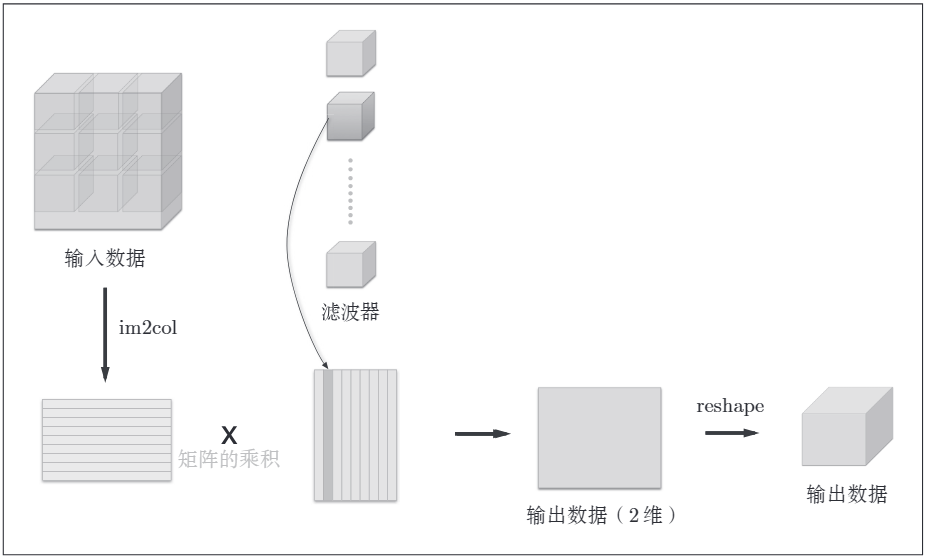

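As shown in the figure, the network is composed as "Conv-ReLU-Pooling-Affine-ReLU-Affine-Softmax". The data passing through the convolution and pooling layers is four-dimensional (batch size, channels, height, width), which is awkward to compute with directly, so the im2col function is used to unfold it into a two-dimensional matrix. At the output, NumPy's reshape restores the expected output shape, which keeps the computation simple. The accompanying diagram illustrates this.

This also satisfies the requirement of matrix multiplication, namely that the number of columns of the first matrix must match the number of rows of the second.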
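To make the unfolding step concrete, here is a minimal sketch of an im2col-style function and the matrix product it enables. The name `im2col_sketch` and the random test data are my own for illustration; the real implementation lives in the book's common code, so treat this as an approximation of the idea rather than that exact code.

```python
import numpy as np


def im2col_sketch(x, filter_h, filter_w, stride=1, pad=0):
    """Unfold a 4-D batch (N, C, H, W) into a 2-D matrix, one row per filter position."""
    n, c, h, w = x.shape
    out_h = (h + 2 * pad - filter_h) // stride + 1
    out_w = (w + 2 * pad - filter_w) // stride + 1

    # Zero-pad only the two spatial axes.
    img = np.pad(x, [(0, 0), (0, 0), (pad, pad), (pad, pad)], mode="constant")
    col = np.zeros((n, c, filter_h, filter_w, out_h, out_w), dtype=x.dtype)

    # For each offset inside the filter window, copy the strided slice it covers.
    for i in range(filter_h):
        i_max = i + stride * out_h
        for j in range(filter_w):
            j_max = j + stride * out_w
            col[:, :, i, j, :, :] = img[:, :, i:i_max:stride, j:j_max:stride]

    # Rows: N * out_h * out_w positions; columns: C * filter_h * filter_w values.
    return col.transpose(0, 4, 5, 1, 2, 3).reshape(n * out_h * out_w, -1)


x = np.random.rand(2, 1, 28, 28)   # 2 single-channel 28x28 images (made-up data)
w = np.random.rand(30, 1, 5, 5)    # 30 filters of size 5x5 (made-up data)

col = im2col_sketch(x, 5, 5)       # (2*24*24, 1*5*5) = (1152, 25)
col_w = w.reshape(30, -1).T        # (25, 30): columns of col match rows of col_w
out = col @ col_w                  # (1152, 30)
out = out.reshape(2, 24, 24, 30).transpose(0, 3, 1, 2)  # back to (N, C, H, W)
print(col.shape, col_w.shape, out.shape)
```

The point of the sketch is the shapes: once the input is unfolded to 25 columns and the filters to 25 rows, the whole convolution is a single matrix product, and reshape plus transpose puts the result back into four dimensions.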

The CNN code is as follows:

# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # make modules in the parent directory importable
import pickle
import numpy as np
from collections import OrderedDict
from DeepLearn_Base.common.layers import *
from DeepLearn_Base.common.gradient import numerical_gradient


class SimpleConvNet:
    """A simple ConvNet

    conv - relu - pool - affine - relu - affine - softmax

    Parameters
    ----------
    input_dim : shape of one input sample as (channels, height, width)
    conv_param : convolution settings; filter_num: number of filters;
        filter_size: filter size; stride: stride; pad: padding
    hidden_size : number of neurons in the hidden affine layer
    output_size : output size (10 for MNIST)
    weight_init_std : standard deviation of the initial weights (e.g. 0.01)
    """
    def __init__(self, input_dim=(1, 28, 28),
                 conv_param={'filter_num':30, 'filter_size':5, 'pad':0, 'stride':1},
                 hidden_size=100, output_size=10, weight_init_std=0.01):
        filter_num = conv_param['filter_num']
        filter_size = conv_param['filter_size']
        filter_pad = conv_param['pad']
        filter_stride = conv_param['stride']
        input_size = input_dim[1]
        conv_output_size = (input_size - filter_size + 2*filter_pad) / filter_stride + 1
        pool_output_size = int(filter_num * (conv_output_size/2) * (conv_output_size/2))

        # Initialize the weights and biases
        self.params = {}
        self.params['W1'] = weight_init_std * \
                            np.random.randn(filter_num, input_dim[0], filter_size, filter_size)
        self.params['b1'] = np.zeros(filter_num)
        self.params['W2'] = weight_init_std * \
                            np.random.randn(pool_output_size, hidden_size)
        self.params['b2'] = np.zeros(hidden_size)
        self.params['W3'] = weight_init_std * \
                            np.random.randn(hidden_size, output_size)
        self.params['b3'] = np.zeros(output_size)

        # Create the layers
        self.layers = OrderedDict()
        self.layers['Conv1'] = Convolution(self.params['W1'], self.params['b1'],
                                           conv_param['stride'], conv_param['pad'])
        self.layers['Relu1'] = Relu()
        self.layers['Pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
        self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
        self.layers['Relu2'] = Relu()
        self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])

        self.last_layer = SoftmaxWithLoss()

    # The input data and the filters are both multi-dimensional; each is flattened
    # so that the work becomes a matrix product.
    # In the forward pass, layers such as Convolution and Pooling use im2col
    # together with reshape to flatten the data for that product.
    def predict(self, x):
        for layer in self.layers.values():
            x = layer.forward(x)

        return x

    def loss(self, x, t):
        """Compute the loss.

        x is the input data, t is the teacher (ground-truth) label
        """
        y = self.predict(x)
        return self.last_layer.forward(y, t)

    # Compute the accuracy, batch by batch
    def accuracy(self, x, t, batch_size=100):
        if t.ndim != 1:
            t = np.argmax(t, axis=1)

        acc = 0.0

        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i*batch_size:(i+1)*batch_size]
            tt = t[i*batch_size:(i+1)*batch_size]
            y = self.predict(tx)
            y = np.argmax(y, axis=1)
            acc += np.sum(y == tt)

        return acc / x.shape[0]

    def numerical_gradient(self, x, t):
        """Compute the gradients by numerical differentiation.

        Parameters
        ----------
        x : input data
        t : teacher labels

        Returns
        -------
        Dictionary holding the gradients of every layer
            grads['W1'], grads['W2'], ... are the gradients of each layer's weights
            grads['b1'], grads['b2'], ... are the gradients of each layer's biases
        """
        loss_w = lambda w: self.loss(x, t)

        grads = {}
        for idx in (1, 2, 3):
            grads['W' + str(idx)] = numerical_gradient(loss_w, self.params['W' + str(idx)])
            grads['b' + str(idx)] = numerical_gradient(loss_w, self.params['b' + str(idx)])

        return grads

    def gradient(self, x, t):
        """Compute the gradients by backpropagation.

        Parameters
        ----------
        x : input data
        t : teacher labels

        Returns
        -------
        Dictionary holding the gradients of every layer
            grads['W1'], grads['W2'], ... are the gradients of each layer's weights
            grads['b1'], grads['b2'], ... are the gradients of each layer's biases
        """
        # forward
        self.loss(x, t)

        # backward
        dout = 1
        dout = self.last_layer.backward(dout)

        layers = list(self.layers.values())
        layers.reverse()
        for layer in layers:
            dout = layer.backward(dout)

        # Collect the gradients
        grads = {}
        grads['W1'], grads['b1'] = self.layers['Conv1'].dW, self.layers['Conv1'].db
        grads['W2'], grads['b2'] = self.layers['Affine1'].dW, self.layers['Affine1'].db
        grads['W3'], grads['b3'] = self.layers['Affine2'].dW, self.layers['Affine2'].db

        return grads

    def save_params(self, file_name="params.pkl"):
        params = {}
        for key, val in self.params.items():
            params[key] = val
        with open(file_name, 'wb') as f:
            pickle.dump(params, f)

    def load_params(self, file_name="params.pkl"):
        with open(file_name, 'rb') as f:
            params = pickle.load(f)
        for key, val in params.items():
            self.params[key] = val

        # Point the layers at the freshly loaded weights and biases
        for i, key in enumerate(['Conv1', 'Affine1', 'Affine2']):
            self.layers[key].W = self.params['W' + str(i+1)]
            self.layers[key].b = self.params['b' + str(i+1)]

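The implementations of the activation functions and the convolution function are not written out in detail here; you can download the source and read them yourself.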
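For readers who want a feel for what such a layer looks like before opening the source, here is a minimal sketch of a ReLU layer with `forward` and `backward` methods, matching the interface that `SimpleConvNet` calls. The class name `ReluSketch` and the sample input are my own; this is an illustration of the technique, not a copy of the downloaded code.

```python
import numpy as np


class ReluSketch:
    """ReLU layer: pass positive inputs through, zero out the rest."""

    def __init__(self):
        self.mask = None  # remembers which inputs were <= 0 in the forward pass

    def forward(self, x):
        self.mask = (x <= 0)
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        # Gradient flows only through the positions that were positive.
        dx = dout.copy()
        dx[self.mask] = 0
        return dx


layer = ReluSketch()
x = np.array([[1.0, -0.5], [-2.0, 3.0]])   # made-up input
y = layer.forward(x)
dx = layer.backward(np.ones_like(x))
print(y)
print(dx)
```

Every layer in the network follows this same pattern: `forward` stores whatever `backward` will need later, which is why `gradient` must run the forward pass first.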

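Throughout this process, I personally found the hardest parts to be assembling the network layers and getting the data computations right. The former determines the structure of the network, while the latter determines whether the final result is correct. Only once the data is flattened can the inner products (matrix multiplications) be computed conveniently and correctly.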
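The shape bookkeeping is easier to trust once it is worked through with the default settings. The sketch below repeats the size formula from `SimpleConvNet.__init__` for a 28 × 28 input, 30 filters of size 5, no padding, and stride 1. The helper name `conv_output_size` is mine, and it uses integer division where the class uses `/` followed by `int()`.

```python
def conv_output_size(input_size, filter_size, pad=0, stride=1):
    """Spatial size after a convolution (same formula as in SimpleConvNet.__init__)."""
    return (input_size - filter_size + 2 * pad) // stride + 1


input_dim = (1, 28, 28)
filter_num, filter_size = 30, 5
hidden_size = 100

conv_size = conv_output_size(input_dim[1], filter_size)   # (28 - 5 + 0) / 1 + 1 = 24
pool_size = conv_size // 2                                # 2x2 pooling, stride 2 -> 12
flat_size = filter_num * pool_size * pool_size            # 30 * 12 * 12 = 4320

print("after Conv1:", (filter_num, conv_size, conv_size))
print("after Pool1:", (filter_num, pool_size, pool_size))
print("W2 shape:   ", (flat_size, hidden_size))
```

So `W2` needs 4320 rows: that is the length of each sample after the pooled feature maps are flattened for `Affine1`.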

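Training

trainer.py contains the class that trains the network. It reports accuracy after each completed epoch, and it requires choosing settings such as the gradient update algorithm (optimizer), learning rate, batch size, and number of epochs.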

# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # make modules in the parent directory importable
import numpy as np
from DeepLearn_Base.common.optimizer import *


class Trainer:
    """Class that trains a neural network

    epochs: one epoch is one forward/backward pass over all the data; this value is the total count
    mini_batch_size: number of samples per iteration (default 100)
    evaluate_sample_num_per_epoch: number of samples used to measure accuracy
        after each epoch (1000 in this post; None evaluates on all data)
    """
    def __init__(self, network, x_train, t_train, x_test, t_test,
                 epochs=20, mini_batch_size=100,
                 optimizer='SGD', optimizer_param={'lr':0.01},
                 evaluate_sample_num_per_epoch=None, verbose=True):
        self.network = network
        self.verbose = verbose
        self.x_train = x_train
        self.t_train = t_train
        self.x_test = x_test
        self.t_test = t_test
        self.epochs = epochs
        self.batch_size = mini_batch_size
        self.evaluate_sample_num_per_epoch = evaluate_sample_num_per_epoch

        # optimizer: selects the parameter update rule; several gradient-based
        # update algorithms are available
        optimizer_class_dict = {'sgd':SGD, 'momentum':Momentum, 'nesterov':Nesterov,
                                'adagrad':AdaGrad, 'rmsprop':RMSprop, 'adam':Adam}
        self.optimizer = optimizer_class_dict[optimizer.lower()](**optimizer_param)

        self.train_size = x_train.shape[0]
        self.iter_per_epoch = max(self.train_size / mini_batch_size, 1)
        self.max_iter = int(epochs * self.iter_per_epoch)
        self.current_iter = 0
        self.current_epoch = 0

        self.train_loss_list = []
        self.train_acc_list = []
        self.test_acc_list = []

    def train_step(self):
        # Randomly pick a mini-batch for this update
        batch_mask = np.random.choice(self.train_size, self.batch_size)
        x_batch = self.x_train[batch_mask]
        t_batch = self.t_train[batch_mask]

        # Compute the gradients and update the parameters
        grads = self.network.gradient(x_batch, t_batch)
        self.optimizer.update(self.network.params, grads)

        # Compute the loss
        loss = self.network.loss(x_batch, t_batch)
        self.train_loss_list.append(loss)
        if self.verbose: print("train loss:" + str(loss))

        # Check whether one epoch's worth of iterations has completed
        if self.current_iter % self.iter_per_epoch == 0:
            self.current_epoch += 1

            x_train_sample, t_train_sample = self.x_train, self.t_train
            x_test_sample, t_test_sample = self.x_test, self.t_test
            if self.evaluate_sample_num_per_epoch is not None:
                t = self.evaluate_sample_num_per_epoch
                x_train_sample, t_train_sample = self.x_train[:t], self.t_train[:t]
                x_test_sample, t_test_sample = self.x_test[:t], self.t_test[:t]

            train_acc = self.network.accuracy(x_train_sample, t_train_sample)
            test_acc = self.network.accuracy(x_test_sample, t_test_sample)
            self.train_acc_list.append(train_acc)
            self.test_acc_list.append(test_acc)

            if self.verbose: print("=== epoch:" + str(self.current_epoch) + ", train acc:" + str(train_acc) + ", test acc:" + str(test_acc) + " ===")
        self.current_iter += 1

    def train(self):
        for i in range(self.max_iter):
            self.train_step()

        test_acc = self.network.accuracy(self.x_test, self.t_test)

        if self.verbose:
            print("=============== Final Test Accuracy ===============")
            print("test acc:" + str(test_acc))

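In neural network training, an epoch is one pass of the whole training set through the model, with the model parameters updated along the way. In each epoch the model sees the training samples, computes the loss from the sample labels and the model's predictions, and updates its parameters based on that loss (with mini-batches, once per batch). This repeats until the preset number of epochs is reached.

To actually start training, prepare the training dataset, initialize the CNN, and set the optimizer parameters, the path where the learned parameters will be stored, and so on, as shown below: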
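To see how epochs translate into iterations in `Trainer`, here is a small sketch of the arithmetic using the sizes from the training script in this post (5000 training samples, batches of 100, 20 epochs). It mirrors the `iter_per_epoch` and `max_iter` calculations, with integer division for readability. One caveat worth keeping in mind: `train_step` draws each mini-batch with `np.random.choice`, so an epoch here means "as many iterations as it would take to cover the training set", not a guaranteed single visit to every sample.

```python
train_size = 5000        # training samples used in this post
mini_batch_size = 100
epochs = 20

iter_per_epoch = max(train_size // mini_batch_size, 1)
max_iter = epochs * iter_per_epoch

# Iterations at which Trainer.train_step evaluates accuracy
# (the condition current_iter % iter_per_epoch == 0).
eval_iters = [i for i in range(max_iter) if i % iter_per_epoch == 0]

print(iter_per_epoch, max_iter, len(eval_iters))
```

With these numbers there are 50 iterations per epoch and 1000 iterations in total, and accuracy is recorded 20 times, which is why the accuracy lists line up with `np.arange(max_epochs)` when plotted.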

# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # make modules in the parent directory importable
import numpy as np
import matplotlib.pyplot as plt
from DeepLearn_Base.dataset.mnist import load_mnist
from simple_convnet import SimpleConvNet
from DeepLearn_Base.common.trainer import Trainer

# Load the data
# The input can be kept multi-dimensional or flattened (reshaped) to one dimension
(x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)

# Training takes a while, so use only part of the data:
# the first 5000 training samples
# and the first 1000 test samples
x_train, t_train = x_train[:5000], t_train[:5000]
x_test, t_test = x_test[:1000], t_test[:1000]

# Number of epochs
max_epochs = 20

# Initialize the CNN
# input_dim, input data: channel, height, width
# conv_param, convolution settings: filter_num: number of filters; filter_size: filter size;
# stride: stride; pad: padding; here 30 filters of size 5 x 5 with 1 channel
network = SimpleConvNet(input_dim=(1,28,28),
                        conv_param = {'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                        hidden_size=100, output_size=10, weight_init_std=0.01)

# Initialize the trainer
# optimizer: gradient-based update algorithm; lr is the learning rate
trainer = Trainer(network, x_train, t_train, x_test, t_test,
                  epochs=max_epochs, mini_batch_size=100,
                  optimizer='Adam', optimizer_param={'lr': 0.001},
                  evaluate_sample_num_per_epoch=1000)
trainer.train()

# Save the parameters
network.save_params("E:\\workcode\\code\\DeepLearn_Base\\ch07\\cnn_params.pkl")
print("Saved Network Parameters!")

# Plot the accuracy curves
markers = {'train': 'o', 'test': 's'}
x = np.arange(max_epochs)
plt.plot(x, trainer.train_acc_list, marker='o', label='train', markevery=2)
plt.plot(x, trainer.test_acc_list, marker='s', label='test', markevery=2)
plt.xlabel("epochs")
plt.ylabel("accuracy")
plt.ylim(0, 1.0)
plt.legend(loc='lower right')
plt.show()

Once training finishes, check that the parameter file has been generated.
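One way to do that check is to open the pickle file and look at the keys and array shapes. The sketch below assumes the file has the layout written by `save_params` (a dict of NumPy arrays keyed `W1`, `b1`, and so on). The helper `describe_params` is hypothetical, and so that the snippet runs on its own it inspects a stand-in file of zeros in a temporary directory rather than the real trained file.

```python
import os
import pickle
import tempfile

import numpy as np


def describe_params(file_name):
    """Return {name: shape} for a pickle file written by save_params."""
    if not os.path.exists(file_name):
        raise FileNotFoundError(file_name)
    with open(file_name, "rb") as f:
        params = pickle.load(f)
    return {key: val.shape for key, val in params.items()}


# Stand-in file with the keys and shapes the default SimpleConvNet would save
# (zeros instead of trained values, written to a temporary directory).
dummy = {
    "W1": np.zeros((30, 1, 5, 5)), "b1": np.zeros(30),
    "W2": np.zeros((4320, 100)),   "b2": np.zeros(100),
    "W3": np.zeros((100, 10)),     "b3": np.zeros(10),
}
path = os.path.join(tempfile.mkdtemp(), "cnn_params.pkl")
with open(path, "wb") as f:
    pickle.dump(dummy, f)

shapes = describe_params(path)
for name, shape in shapes.items():
    print(name, shape)
```

To inspect the real file, pass the path given to `save_params` instead. Since `pickle.load` can execute arbitrary code, only load parameter files you produced yourself or otherwise trust.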

Inference

Prepare the test dataset, then apply the trained network together with its saved parameters.

# coding: utf-8
import sys, os
# make modules in the parent directory importable
sys.path.append(os.pardir)
import numpy as np
from DeepLearn_Base.dataset.mnist import load_mnist
from DeepLearn_Base.common.functions import sigmoid, softmax
from simple_convnet import SimpleConvNet


def get_data():
    (x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)
    return x_test, t_test


# Download the MNIST dataset:
# test images, test labels, training images, and training labels
x, t = get_data()

conv = SimpleConvNet()
# Load the trained weights and biases
conv.load_params("E:\\workcode\\code\\DeepLearn_Base\\ch07\\cnn_params.pkl")

# Initialize
batch_size = 100
accuracy_cnt = 0

for i in range(int(x.shape[0] / batch_size)):
    # Take one batch of data
    x_batch = x[i * batch_size : (i+1) * batch_size]
    tt = t[i * batch_size : (i+1) * batch_size]
    # Run inference
    y_batch = conv.predict(x_batch)
    p = np.argmax(y_batch, axis=1)
    # Count the correct predictions
    accuracy_cnt += np.sum(p == tt)
    print(f'Batch {i}: {(i+1) * batch_size} samples processed, {accuracy_cnt} predicted correctly')

print("Accuracy:" + str(float(accuracy_cnt) / x.shape[0]))

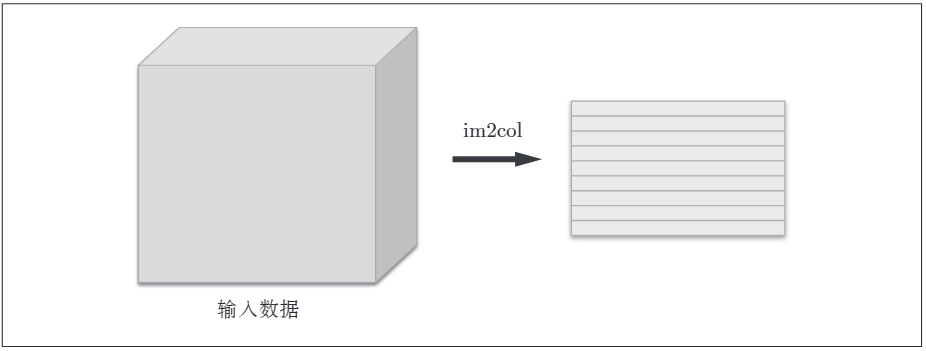

The image above shows the final output.
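A note on the inference loop: `predict` returns the raw scores of the last affine layer, and the script takes `argmax` of them directly without applying softmax. That works because softmax preserves the ordering of the scores within each row. The sketch below demonstrates this on made-up scores and labels; the `softmax` defined here is a local stand-in written for this example, not the one imported from the book's code.

```python
import numpy as np


def softmax(x):
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    x = x - np.max(x, axis=1, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=1, keepdims=True)


# Made-up scores for 3 samples and 4 classes, plus made-up labels.
scores = np.array([[2.0, 0.5, -1.0, 0.1],
                   [0.2, 0.1, 3.0, -0.5],
                   [-1.0, 4.0, 0.0, 0.3]])
labels = np.array([0, 2, 3])

pred_from_scores = np.argmax(scores, axis=1)
pred_from_probs = np.argmax(softmax(scores), axis=1)

correct = int(np.sum(pred_from_scores == labels))
print(pred_from_scores, pred_from_probs, correct / len(labels))
```

Both prediction arrays are identical, so skipping softmax at inference time saves a little computation without changing which class is chosen. Softmax is only needed when the actual probabilities matter, as in the loss during training.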