This is because `multiprocessing` requires interprocess communication (IPC) between the main process and the worker processes behind the scenes, and in your case that communication overhead took more wall-clock time than the "actual" computation (`x * x`).

Try a "heavier" computation kernel instead, such as:

    import math

    def f(x):
        # Python 2 code; on Python 3, use functools.reduce and range instead.
        return reduce(lambda a, b: math.log(a + b), xrange(10**5), x)
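To check this on your own machine, something like the following rough sketch could be used. It times the light kernel and a Python 3 port of the heavier kernel above, first serially and then through a `Pool`. The names `light`, `heavy` and `timed`, the task counts and the pool size of 4 are my own assumptions for illustration, and `heavy` starts its range at 1 (unlike the original) so that `heavy(0)` never evaluates `log(0)`.

```python
import math
import time
from functools import reduce
from multiprocessing import Pool


def light(x):
    # The original kernel: almost no work per task.
    return x * x


def heavy(x):
    # Python 3 port of the heavier kernel. Starting the range at 1 is a
    # change from the original, made so that heavy(0) never calls log(0).
    return reduce(lambda a, b: math.log(a + b), range(1, 10**5), x)


def timed(func, *args):
    # Return (wall-clock seconds, result) for a single call.
    start = time.perf_counter()
    result = func(*args)
    return time.perf_counter() - start, result


if __name__ == "__main__":
    with Pool(processes=4) as pool:
        # Task counts are arbitrary; tune them for your machine.
        for kernel, n in ((light, 200000), (heavy, 100)):
            serial_s, expected = timed(lambda: list(map(kernel, range(n))))
            parallel_s, got = timed(pool.map, kernel, range(n))
            assert got == expected
            print(f"{kernel.__name__}: serial {serial_s:.2f}s, "
                  f"pool {parallel_s:.2f}s")
```

I would expect the pool to bring little or no gain for `light` and a clear gain for `heavy`, but the actual numbers depend on the machine and the Python version.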

Update (clarification)

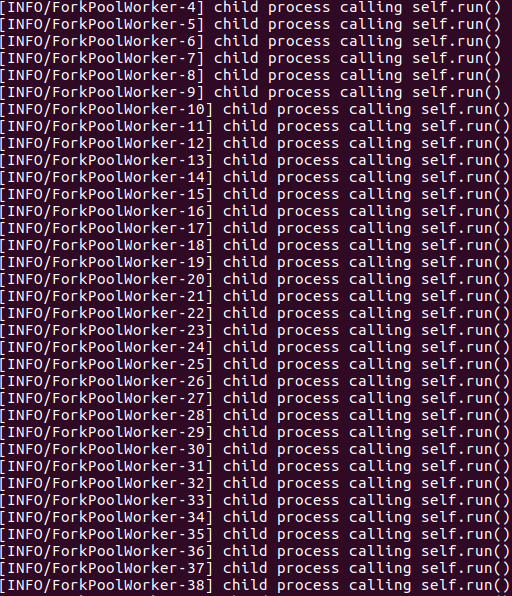

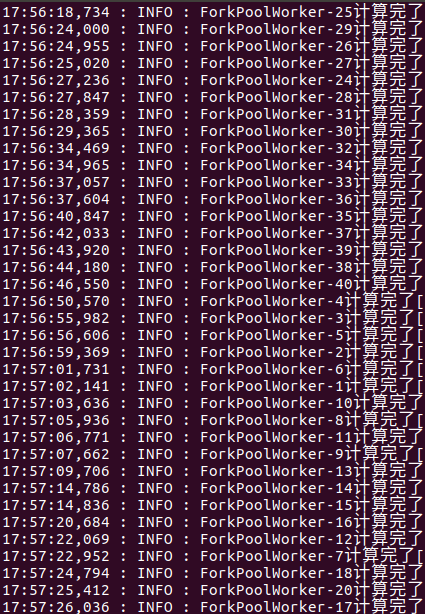

I pointed out that the low CPU usage observed by the OP was due to the IPC overhead inherent in `multiprocessing`, but the OP didn't need to worry about it too much because the original computation kernel was far too "light" to be used as a benchmark. In other words, `multiprocessing` works at its worst with such a "light" kernel. If the OP implements real-world logic (which, I'm sure, will be somewhat "heavier" than `x * x`) on top of `multiprocessing`, the OP will achieve decent efficiency, I assure you. My argument is backed up by an experiment with the "heavy" kernel I presented.

@FilipMalczak, I hope my clarification makes sense to you.

By the way, there are some ways to improve the efficiency of `x * x` while using `multiprocessing`. For example, we can combine 1,000 jobs into one before we submit it to `Pool`, unless each job has to be solved in real time (i.e. if you are implementing a REST API server, you shouldn't do it this way).
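A minimal sketch of that batching idea, assuming the inputs fit in a list: `square_batch` and `make_batches` are hypothetical helper names of mine, not part of `multiprocessing`. Note that `Pool.map` already splits its input into chunks on its own, and its `chunksize` argument just makes the chunk size explicit, so explicit batching matters most with calls such as `apply_async` or `imap`, which submit items one at a time by default.

```python
from multiprocessing import Pool


def square(x):
    return x * x


def square_batch(batch):
    # One task covers a whole batch, so the IPC cost is paid once per batch.
    return [square(x) for x in batch]


def make_batches(items, size):
    # Split a list into consecutive slices of at most `size` items.
    return [items[i:i + size] for i in range(0, len(items), size)]


if __name__ == "__main__":
    data = list(range(100000))
    with Pool(processes=4) as pool:
        # Explicit batching: 1,000 jobs per submitted task.
        nested = pool.map(square_batch, make_batches(data, 1000))
        flat = [y for batch in nested for y in batch]
        # Built-in alternative: let map() group the inputs itself.
        chunked = pool.map(square, data, chunksize=1000)
    assert flat == chunked == [x * x for x in data]
```

The trade-off is latency: a job sitting in a batch waits for the whole batch to finish, which is why this does not suit the real-time case mentioned above.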

`print` most of the time, so it is what is called "I/O bound". `print` starts until the `map` finishes. Synchronization overhead might account for some of the underutilization.