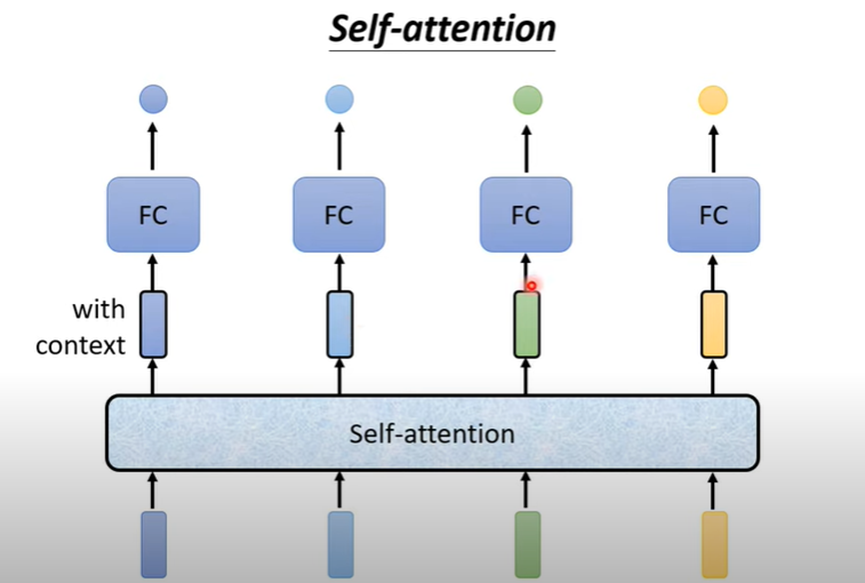

Self-attention takes the information of the whole sequence into account (self-attention is the key building block of the Transformer).

It is used for sequence-to-sequence problems, where each output has to consider the context before and after its position.

Example: in "I saw a saw", the first "saw" should be output as a verb and the second as a noun.

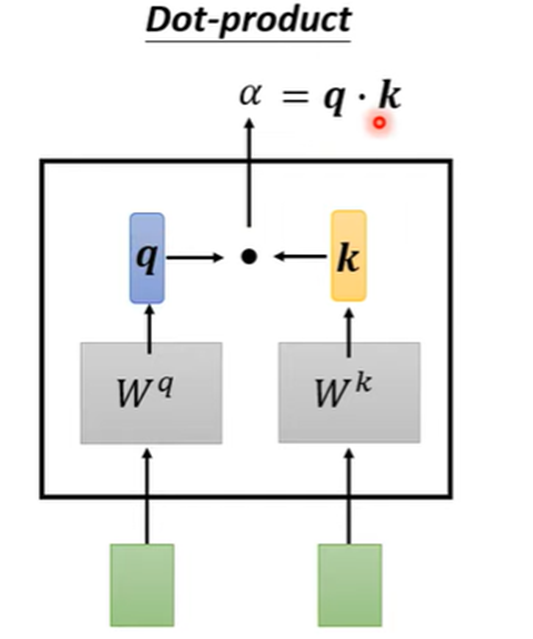

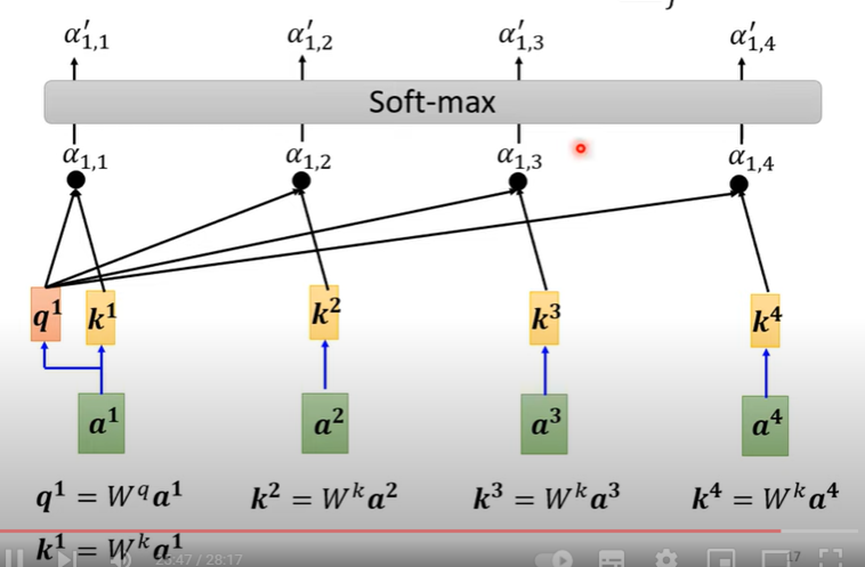

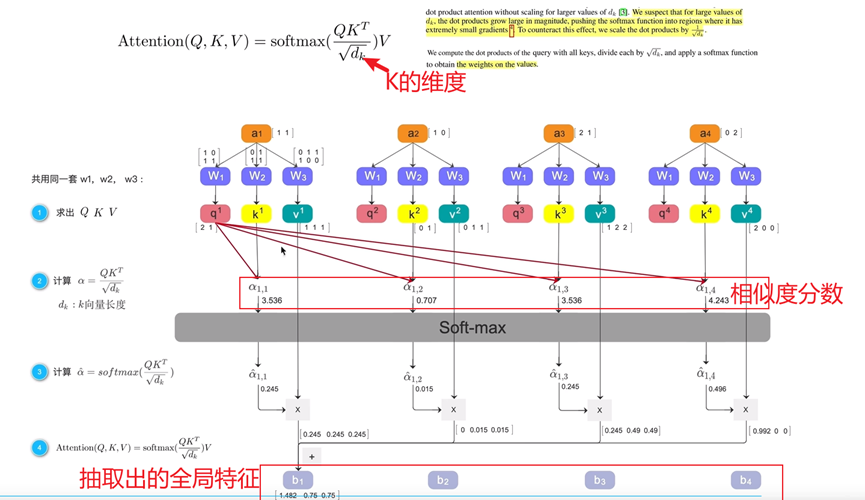

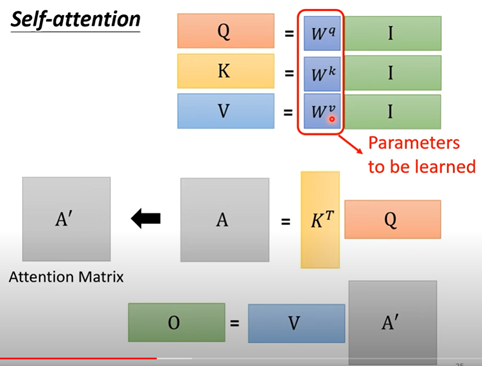

How to compute the relevance between two vectors (the attention score)

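Each of the two input vectors is multiplied by its own matrix: one by W^q to give the query q, the other by W^k to give the key k. The dot product of q and k is the attention score.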

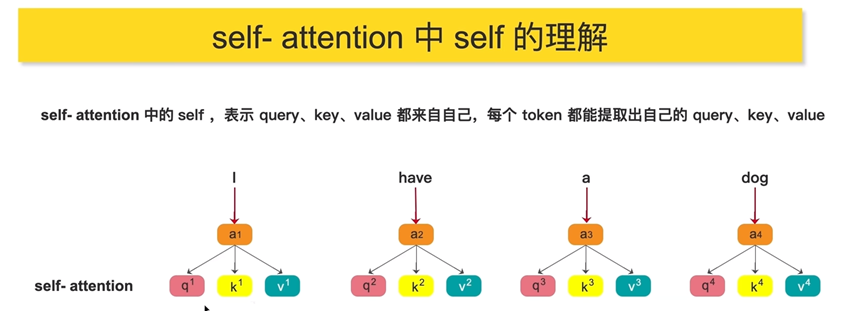

q = query, k = key
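The score between two inputs can be sketched in a few lines of NumPy. This is a minimal illustration that assumes the dot-product form of the score. The function name `attention_score`, the dimensions, and the random matrices are made up for the example, not taken from the lecture.

```python
import numpy as np

def attention_score(a_i, a_j, W_q, W_k):
    """Dot-product relevance of a_j to a_i (illustrative helper)."""
    q = W_q @ a_i   # query computed from the first vector
    k = W_k @ a_j   # key computed from the second vector
    return float(q @ k)

rng = np.random.default_rng(0)
d_in, d_k = 4, 3                      # assumed sizes, for illustration only
W_q = rng.normal(size=(d_k, d_in))    # would be learned in practice
W_k = rng.normal(size=(d_k, d_in))
a1 = rng.normal(size=d_in)
a2 = rng.normal(size=d_in)

score_12 = attention_score(a1, a2, W_q, W_k)
```

Swapping the two arguments generally gives a different number, because the query and the key come from different matrices.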

Take a1 as an example: it is also scored against itself, which gives α1,1.
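A small sketch of this step, under assumptions: the query of a1 is compared with the key of every input, a1 included, and the raw scores are then normalised with a softmax. The softmax is a common choice that these notes do not state explicitly, and `scores_for_query` is a hypothetical helper name.

```python
import numpy as np

def scores_for_query(A, i, W_q, W_k):
    """Scores of input i against every input, itself included."""
    q_i = W_q @ A[i]               # query of the chosen input
    K = A @ W_k.T                  # one key per row
    raw = K @ q_i                  # raw[j] = q_i . k_j
    e = np.exp(raw - raw.max())    # softmax, shifted for numerical stability
    return raw, e / e.sum()

rng = np.random.default_rng(1)
A = rng.normal(size=(4, 5))        # 4 inputs a1..a4 of dimension 5 (assumed)
W_q = rng.normal(size=(3, 5))
W_k = rng.normal(size=(3, 5))

raw, weights = scores_for_query(A, 0, W_q, W_k)
# raw[0] is the score of a1 with itself; weights sums to 1
```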

Only the parameters of three matrices have to be learned: W^q, W^k and W^v.
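Putting the pieces together, the whole layer can be written with just those three matrices. The sketch below is a simplified single-head version under stated assumptions: softmax normalisation, no scaling by the key dimension, and random matrices standing in for learned ones. The name `self_attention` is illustrative.

```python
import numpy as np

def self_attention(A, W_q, W_k, W_v):
    """Single-head self-attention over the rows of A (simplified sketch)."""
    Q = A @ W_q.T                  # queries, one per input
    K = A @ W_k.T                  # keys
    V = A @ W_v.T                  # values
    scores = Q @ K.T               # scores[i, j] = q_i . k_j
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)   # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(2)
d_in, d_k, d_v = 5, 3, 6           # assumed sizes
W_q = rng.normal(size=(d_k, d_in))
W_k = rng.normal(size=(d_k, d_in))
W_v = rng.normal(size=(d_v, d_in))

out_short, w_short = self_attention(rng.normal(size=(4, d_in)), W_q, W_k, W_v)
out_long, w_long = self_attention(rng.normal(size=(9, d_in)), W_q, W_k, W_v)
```

The same three matrices handle a sequence of 4 inputs and a sequence of 9, so the number of learned parameters does not depend on the sequence length.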
