Choose the version you want.

Mirror link: https://mirrors.aliyun.com/apache/spark/?spm=a2c6h.25603864.0.0.5d1b590eLwbWr2

sudo tar -zxvf spark-3.3.2-bin-without-hadoop.tgz -C /usr/local/

cd /usr/local/

sudo mv spark-3.3.2-bin-without-hadoop spark-3.3.2

sudo chown -R bill:freedom spark-3.3.2

sudo vi /etc/profile

#Spark3.3.2

export SPARK_HOME=/usr/local/spark-3.3.2

export PATH=$PATH:${SPARK_HOME}/bin

source /etc/profile
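
If you would rather not edit /etc/profile by hand, the same two exports can be appended from a script. The sketch below is only an illustration: `add_spark_env` is a hypothetical helper name, and it assumes the profile file is writable by whoever runs it (for /etc/profile that means root). It skips the append if a `SPARK_HOME` export is already present, so running it twice does not duplicate the lines.

```shell
# add_spark_env PROFILE SPARK_HOME_DIR
# Hypothetical helper: appends the Spark exports to PROFILE only if
# no "export SPARK_HOME=" line exists there yet.
add_spark_env() {
  profile="$1"
  spark_home="$2"
  if ! grep -q '^export SPARK_HOME=' "$profile" 2>/dev/null; then
    {
      echo "#Spark3.3.2"
      echo "export SPARK_HOME=${spark_home}"
      # Single quotes keep $PATH and ${SPARK_HOME} unexpanded in the file.
      echo 'export PATH=$PATH:${SPARK_HOME}/bin'
    } >> "$profile"
  fi
}

# Usage (run as root, then reload the profile):
#   add_spark_env /etc/profile /usr/local/spark-3.3.2
#   source /etc/profile
```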

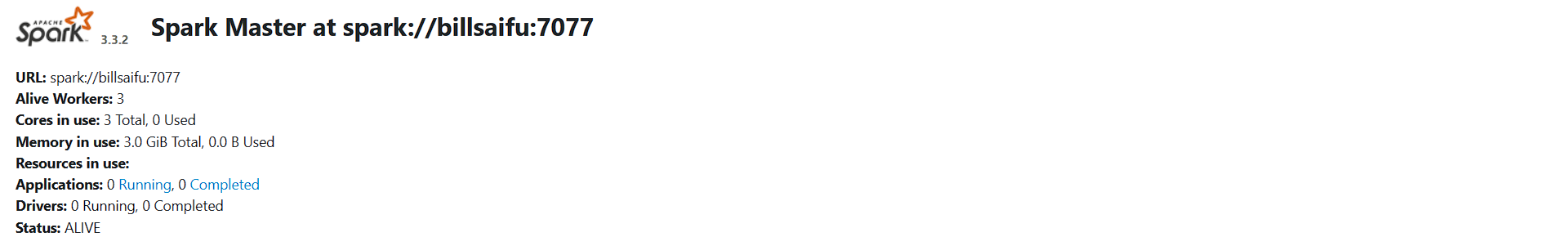

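Below is the Spark configuration. This is a high-availability cluster: the billsaifu host runs the primary master, and the masters on the other two hosts are standbys.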

workers

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# A Spark Worker will be started on each of the machines listed below.

billsaifu

hadoop1

hadoop2

spark-defaults.conf

# Default system properties included when running spark-submit.

# This is useful for setting default environmental settings.

# Example:

# spark.master spark://master:7077

# spark.eventLog.enabled true

# spark.eventLog.dir hdfs://namenode:8021/directory

# spark.serializer org.apache.spark.serializer.KryoSerializer

# spark.driver.memory 5g

# spark.executor.extraJavaOptions -XX:+PrintGCDetails -Dkey=value -Dnumbers="one two three"

spark.eventLog.enabled true

spark.eventLog.dir hdfs://billsaifu:9000/spark/log

spark.yarn.historyServer.address http://billsaifu:19889/jobhistory/logs

spark.history.ui.port 18080

spark-env.sh

#!/usr/bin/env bash

# This file is sourced when running various Spark programs.

# Copy it as spark-env.sh and edit that to configure Spark for your site.

# Options read when launching programs locally with

# ./bin/run-example or ./bin/spark-submit

# - HADOOP_CONF_DIR, to point Spark towards Hadoop configuration files

# - SPARK_LOCAL_IP, to set the IP address Spark binds to on this node

# - SPARK_PUBLIC_DNS, to set the public dns name of the driver program

# Options read by executors and drivers running inside the cluster

# - SPARK_LOCAL_IP, to set the IP address Spark binds to on this node

# - SPARK_PUBLIC_DNS, to set the public DNS name of the driver program

# - SPARK_LOCAL_DIRS, storage directories to use on this node for shuffle and RDD data

# - MESOS_NATIVE_JAVA_LIBRARY, to point to your libmesos.so if you use Mesos

# Options read in any mode

# - SPARK_CONF_DIR, Alternate conf dir. (Default: ${SPARK_HOME}/conf)

# - SPARK_EXECUTOR_CORES, Number of cores for the executors (Default: 1).

# - SPARK_EXECUTOR_MEMORY, Memory per Executor (e.g. 1000M, 2G) (Default: 1G)

# - SPARK_DRIVER_MEMORY, Memory for Driver (e.g. 1000M, 2G) (Default: 1G)

# Options read in any cluster manager using HDFS

# - HADOOP_CONF_DIR, to point Spark towards Hadoop configuration files

# Options read in YARN client/cluster mode

# - YARN_CONF_DIR, to point Spark towards YARN configuration files when you use YARN

# Options for the daemons used in the standalone deploy mode

# - SPARK_MASTER_HOST, to bind the master to a different IP address or hostname

# - SPARK_MASTER_PORT / SPARK_MASTER_WEBUI_PORT, to use non-default ports for the master

# - SPARK_MASTER_OPTS, to set config properties only for the master (e.g. "-Dx=y")

# - SPARK_WORKER_CORES, to set the number of cores to use on this machine

# - SPARK_WORKER_MEMORY, to set how much total memory workers have to give executors (e.g. 1000m, 2g)

# - SPARK_WORKER_PORT / SPARK_WORKER_WEBUI_PORT, to use non-default ports for the worker

# - SPARK_WORKER_DIR, to set the working directory of worker processes

# - SPARK_WORKER_OPTS, to set config properties only for the worker (e.g. "-Dx=y")

# - SPARK_DAEMON_MEMORY, to allocate to the master, worker and history server themselves (default: 1g).

# - SPARK_HISTORY_OPTS, to set config properties only for the history server (e.g. "-Dx=y")

# - SPARK_SHUFFLE_OPTS, to set config properties only for the external shuffle service (e.g. "-Dx=y")

# - SPARK_DAEMON_JAVA_OPTS, to set config properties for all daemons (e.g. "-Dx=y")

# - SPARK_DAEMON_CLASSPATH, to set the classpath for all daemons

# - SPARK_PUBLIC_DNS, to set the public dns name of the master or workers

# Options for launcher

# - SPARK_LAUNCHER_OPTS, to set config properties and Java options for the launcher (e.g. "-Dx=y")

# Generic options for the daemons used in the standalone deploy mode

# - SPARK_CONF_DIR Alternate conf dir. (Default: ${SPARK_HOME}/conf)

# - SPARK_LOG_DIR Where log files are stored. (Default: ${SPARK_HOME}/logs)

# - SPARK_LOG_MAX_FILES Max log files of Spark daemons can rotate to. Default is 5.

# - SPARK_PID_DIR Where the pid file is stored. (Default: /tmp)

# - SPARK_IDENT_STRING A string representing this instance of spark. (Default: $USER)

# - SPARK_NICENESS The scheduling priority for daemons. (Default: 0)

# - SPARK_NO_DAEMONIZE Run the proposed command in the foreground. It will not output a PID file.

# Options for native BLAS, like Intel MKL, OpenBLAS, and so on.

# You might get better performance to enable these options if using native BLAS (see SPARK-21305).

# - MKL_NUM_THREADS=1 Disable multi-threading of Intel MKL

# - OPENBLAS_NUM_THREADS=1 Disable multi-threading of OpenBLAS

export JAVA_HOME=/usr/local/java8/jdk1.8.0_371

#SPARK_MASTER_HOST=billsaifu

#SPARK_MASTER_PORT=7077

SPARK_WORKER_PORT=7079

#export HADOOP_HOME=/usr/local/ha/hadoop-3.3.5/

SPARK_MASTER_WEBUI_PORT=8989

SPARK_WORKER_WEBUI_PORT=8020

export HADOOP_HOME=/usr/local/hadoop-3.2.4

export HADOOP_CONF_DIR=/usr/local/hadoop-3.2.4/etc/hadoop

export YARN_CONF_DIR=/usr/local/hadoop-3.2.4/etc/hadoop

export SPARK_DAEMON_JAVA_OPTS="

-Dspark.deploy.recoveryMode=ZOOKEEPER

-Dspark.deploy.zookeeper.url=billsaifu,hadoop1,hadoop2

-Dspark.deploy.zookeeper.dir=/spark"

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop-3.2.4/bin/hadoop classpath)

export SPARK_HISTORY_OPTS="

-Dspark.history.ui.port=18080

-Dspark.history.fs.logDirectory=hdfs://billsaifu:9000/spark/log

-Dspark.history.retainedApplications=30"
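
Both `spark.eventLog.dir` in spark-defaults.conf and `spark.history.fs.logDirectory` here point at `hdfs://billsaifu:9000/spark/log`. As far as I know, Spark does not create this directory for you, so it should exist in HDFS before the first job or the history server starts. The sketch below reads the path back out of the config file so it is not typed twice. `get_spark_conf` is a hypothetical helper, not part of Spark, and it assumes the simple "key whitespace value" layout used above.

```shell
# get_spark_conf FILE KEY
# Hypothetical helper: prints the value of KEY from a spark-defaults.conf
# style file. Commented lines start with "#", so their first field never
# matches KEY. Only the first match is printed.
get_spark_conf() {
  awk -v key="$2" '$1 == key { print $2; exit }' "$1"
}

# Usage (assumes HDFS is already running and `hdfs` is on PATH):
#   log_dir=$(get_spark_conf "$SPARK_HOME/conf/spark-defaults.conf" spark.eventLog.dir)
#   hdfs dfs -mkdir -p "$log_dir"
```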

"start_spark")

echo " =================== Starting the Spark cluster ==================="

echo " --------------- Starting master and workers ---------------"

ssh billsaifu "/usr/local/spark-3.3.2/sbin/start-all.sh"

echo " --------------- Starting standby masters ---------------"

ssh hadoop1 "/usr/local/spark-3.3.2/sbin/start-master.sh"

ssh hadoop2 "/usr/local/spark-3.3.2/sbin/start-master.sh"

echo " --------------- Starting history server ---------------"

ssh billsaifu "/usr/local/spark-3.3.2/sbin/start-history-server.sh"

;;

"stop_spark")

echo " =================== Stopping the Spark cluster ==================="

echo " --------------- Stopping history server ---------------"

ssh billsaifu "/usr/local/spark-3.3.2/sbin/stop-history-server.sh"

echo " --------------- Stopping standby masters ---------------"

ssh hadoop1 "/usr/local/spark-3.3.2/sbin/stop-master.sh"

ssh hadoop2 "/usr/local/spark-3.3.2/sbin/stop-master.sh"

echo " --------------- Stopping master and workers ---------------"

ssh billsaifu "/usr/local/spark-3.3.2/sbin/stop-all.sh"

;;

Start Spark only after Hadoop and ZooKeeper are running, since the masters use ZooKeeper for recovery and the event log lives on HDFS.
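
With ZooKeeper recovery enabled, an application can be given every master in one URL so that it finds whichever one is currently active. The sketch below builds that URL from the three hosts used in this setup. `build_master_url` is a hypothetical helper, and port 7077 is an assumption: it is the Spark default, and `SPARK_MASTER_PORT` is left commented out in spark-env.sh above.

```shell
# build_master_url PORT HOST...
# Hypothetical helper: joins the hosts into a single
# spark://host1:port,host2:port,... master URL.
build_master_url() {
  port="$1"
  shift
  url="spark://"
  sep=""
  for host in "$@"; do
    url="${url}${sep}${host}:${port}"
    sep=","
  done
  echo "$url"
}

MASTER_URL=$(build_master_url 7077 billsaifu hadoop1 hadoop2)
echo "$MASTER_URL"
# prints spark://billsaifu:7077,hadoop1:7077,hadoop2:7077

# Usage (once the cluster is up):
#   spark-shell --master "$MASTER_URL"
```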